LLM Structured Outputs in Production: Stop Parsing JSON with Regex

If your production codebase contains a function called try_parse_llm_response that runs re.search(r'\{.*?\}', response, re.DOTALL), you are not alone — and you are also in 2023. In 2026, parsing LLM output with regex is the equivalent of hand-rolling your own HTTP client. The ecosystem has moved on, and the gap between teams that know it and teams that don't is widening fast.

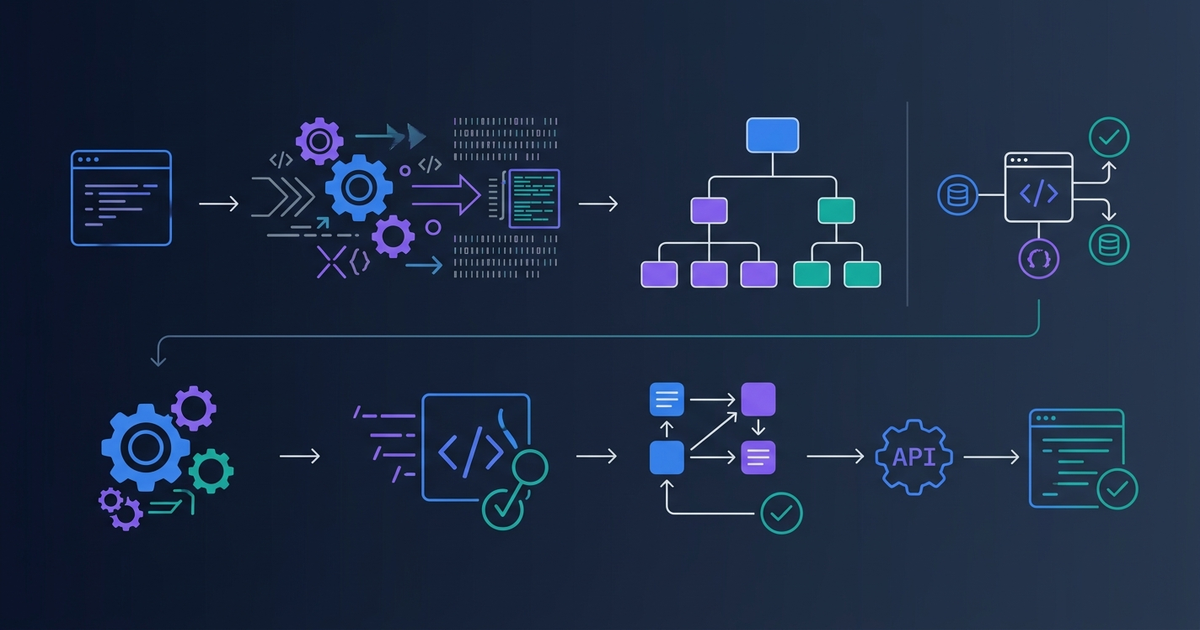

This guide covers everything you need to ship reliable JSON from LLMs: why constrained decoding changed the game, how every major provider implements it, the schema-first development pattern that has become industry standard, and the pitfalls that still catch experienced teams off guard.

Why This Matters Now

Structured output is no longer a "nice to have" for LLM applications. Three trends have made it a production requirement:

Agent pipelines depend on it. When an LLM call is in the middle of a multi-step workflow, a malformed response doesn't just break one request — it corrupts the entire downstream chain. A retry loop with regex fallbacks adds latency and cost at exactly the moment reliability matters most.

Type safety expectations have caught up. In 2024, teams accepted Any return types from LLM calls as an unavoidable tradeoff. In 2026, TypeScript and Python teams expect the same type safety from LLM calls as from any other API. The tooling now delivers that.

The API guarantees are real. OpenAI introduced strict structured outputs in August 2024. Anthropic hit public beta in November 2025 and reached GA in early 2026. Google Gemini, Cohere, and xAI followed. When providers say "guaranteed schema compliance," they mean it — not "usually valid JSON." The guarantee is enforced at generation time, not post-processing.

The Three Eras of LLM Output Parsing

Understanding why current tooling exists requires knowing what it replaced.

Era 1 — String parsing (2023): Prompt the model to return JSON, extract with regex, pray. Failure rate in production: 5–15% depending on model and schema complexity. The workaround was retry loops with increasingly desperate prompts.

Era 2 — JSON Mode (late 2023–2024): Providers offered a response_format: { type: "json_object" } flag. The model would return valid JSON, but not necessarily JSON that matched your schema. You still got {"name": null} when your schema called for a string. Validation errors still happened. JSON Mode was progress, but it only guaranteed syntactic validity, not conformance to your schema.

Era 3 — Constrained Decoding / Native Structured Outputs (2024–2026): The schema is enforced at the token generation level. Invalid tokens are masked before they can be sampled. The output is guaranteed to match your schema. Not "usually." Every time.

JSON Mode is now considered a legacy feature. If you are using it in new code, stop.

How Constrained Decoding Works

The mechanism behind native structured outputs is elegant: your JSON Schema is compiled into a finite state machine (FSM), or into a grammar for schemas an FSM cannot express, such as recursive ones. At each decoding step, a logit processor sits between the model's probability distribution and the sampling function. It tracks the current state and masks the logits of any token that would produce schema-invalid output.

The result: the model can only produce tokens that keep the output on a valid path through your schema. It cannot emit a string where you declared an integer. It cannot omit a required field. It cannot add fields you didn't define (when additionalProperties: false is set).
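To make the masking step concrete, here is a toy sketch in plain Python. It is not how Outlines or any provider implements it: the vocabulary, the hand-written FSM for a one-field schema {"ok": boolean}, and the fake_logits stand-in for a model are all hypothetical. The "model" always prefers the token hello, yet masking leaves it no option but schema-valid JSON.

```python
import math

# Toy vocabulary (hypothetical); real tokenizers have tens of thousands of tokens.
VOCAB = ['{', '"ok"', ':', 'true', 'false', '}', 'hello', '42']

# Hand-written FSM for the schema {"ok": boolean}: state -> {allowed token: next state}.
FSM = {
    0: {'{': 1},
    1: {'"ok"': 2},
    2: {':': 3},
    3: {'true': 4, 'false': 4},
    4: {'}': 5},
}
ACCEPT_STATE = 5


def fake_logits(step: int) -> list[float]:
    """Stand-in for a model forward pass: it 'wants' to say hello."""
    scores = [0.0] * len(VOCAB)
    scores[VOCAB.index('hello')] = 5.0
    scores[VOCAB.index('false')] = 1.0
    scores[VOCAB.index('true')] = 0.5
    return scores


def mask_logits(logits: list[float], state: int) -> list[float]:
    """Logit processor: every token the FSM does not allow gets -inf."""
    allowed = FSM.get(state, {})
    return [score if tok in allowed else -math.inf for tok, score in zip(VOCAB, logits)]


def constrained_greedy_decode(max_steps: int = 10) -> str:
    state, out = 0, []
    for step in range(max_steps):
        if state == ACCEPT_STATE:
            break
        masked = mask_logits(fake_logits(step), state)
        best = max(range(len(VOCAB)), key=lambda i: masked[i])  # greedy "sampling"
        token = VOCAB[best]
        out.append(token)
        state = FSM[state][token]  # advance the FSM
    return ''.join(out)


print(constrained_greedy_decode())  # {"ok":false}
```

Without mask_logits, greedy decoding would pick hello at every step. With it, the only freedom left is the choice between true and false, where the model's own preference (false) wins.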

For local models, libraries like Outlines implement this directly. For API providers, the FSM logic runs server-side — you just pass the schema and get a compliant response back.

This is why strict mode is fundamentally different from JSON Mode. JSON Mode only ensures the output is some valid JSON; constrained decoding against your schema shapes the generation itself, so only schema-valid continuations are ever available to the sampler.

Schema-First Development: The 2026 Standard

The shift in development practice is as important as the technical mechanism. Schema-first development means:

- Define your data structure in Pydantic (Python) or Zod (TypeScript) first

- Generate the JSON Schema from that definition automatically

- Build your prompts to match the schema — not the other way around

- Validate all LLM output against the same model before it touches your application logic

This pattern eliminates an entire class of bugs where the prompt and the parser disagree about the expected shape.
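A minimal sketch of the four steps with Pydantic v2 (the Invoice model and the prompt wording are hypothetical): one model generates the schema you send, supplies the field list for the prompt, and validates the raw response before it reaches application code.

```python
from pydantic import BaseModel, Field, ValidationError


# Step 1: the data structure is defined once (hypothetical example model).
class Invoice(BaseModel):
    vendor: str = Field(description="Name of the company that issued the invoice")
    total_cents: int = Field(ge=0, description="Invoice total in cents")
    paid: bool


# Step 2: the JSON Schema is generated from the model, never hand-written.
INVOICE_SCHEMA = Invoice.model_json_schema()

# Step 3: the prompt is derived from the schema, so the two cannot drift apart.
SYSTEM_PROMPT = "Extract an invoice with these fields: " + ", ".join(
    f"{name} ({spec['description']})" if "description" in spec else name
    for name, spec in INVOICE_SCHEMA["properties"].items()
)


# Step 4: the same model validates raw LLM output before it is used.
def parse_invoice(raw: str) -> Invoice:
    return Invoice.model_validate_json(raw)


def count_errors(raw: str) -> int:
    """Return the number of validation errors (0 if raw is a valid Invoice)."""
    try:
        parse_invoice(raw)
    except ValidationError as exc:
        return exc.error_count()
    return 0


print(INVOICE_SCHEMA["required"])  # ['vendor', 'total_cents', 'paid']
print(count_errors('{"vendor": "Acme", "total_cents": 1999, "paid": true}'))  # 0
print(count_errors('{"vendor": "Acme", "total_cents": -5}'))  # 2
```

The second payload fails twice, once for the negative total (the ge=0 constraint) and once for the missing paid field, and both errors come from the same model that produced the schema.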

Python: Pydantic

Pydantic is the de facto standard for structured LLM output in Python. Define your model once, and Pydantic generates the JSON Schema, validates responses, and gives you type-safe objects.

```python
from typing import Literal

from openai import OpenAI
from pydantic import BaseModel, Field


class ProductReview(BaseModel):
    sentiment: Literal["positive", "negative", "neutral"]
    score: int = Field(ge=1, le=10, description="Rating from 1 to 10")
    summary: str = Field(max_length=200)
    key_issues: list[str] = Field(default_factory=list)
    would_recommend: bool


client = OpenAI()

response = client.beta.chat.completions.parse(
    model="gpt-4.1",
    messages=[
        {"role": "system", "content": "Extract a structured product review."},
        {"role": "user", "content": "The battery life is terrible and it crashes constantly. Would not buy again."},
    ],
    response_format=ProductReview,
)

review = response.choices[0].message.parsed

# review is now a typed ProductReview object
print(review.sentiment)  # "negative"
print(review.score)  # e.g. 2
print(review.would_recommend)  # False
```

Notice .parse() instead of .create(). The OpenAI Python SDK's parse() method takes a Pydantic model directly, handles the JSON Schema conversion, and returns a typed object. No json.loads() and no hand-written parsing; the one case you still have to check is a refusal, where parsed is None.

TypeScript: Zod

Zod 4 (released in 2025) introduced native JSON Schema conversion (z.toJSONSchema()), making it the TypeScript equivalent of Pydantic for LLM workflows:

```typescript
import { z } from 'zod';
import OpenAI from 'openai';
import { zodResponseFormat } from 'openai/helpers/zod';

const ProductReview = z.object({
  sentiment: z.enum(['positive', 'negative', 'neutral']),
  score: z.number().int().min(1).max(10),
  summary: z.string().max(200),
  keyIssues: z.array(z.string()).default([]),
  wouldRecommend: z.boolean(),
});

const client = new OpenAI();

const response = await client.beta.chat.completions.parse({
  model: 'gpt-4.1',
  messages: [
    { role: 'system', content: 'Extract a structured product review.' },
    { role: 'user', content: 'Battery dies in 2 hours and the screen flickers. Returning it.' },
  ],
  response_format: zodResponseFormat(ProductReview, 'product_review'),
});

const review = response.choices[0].message.parsed;
// TypeScript types review as z.infer<typeof ProductReview> | null (null on a refusal)
```

Both examples follow the same pattern: define schema once, send to API, receive typed object.

Provider Support in 2026

| Provider | Structured Outputs GA | Schema Enforcement | Refusal Handling | Local Support |
|---|---|---|---|---|
| OpenAI | August 2024 | Constrained decoding | message.refusal field | No |
| Anthropic Claude | Early 2026 | Constrained decoding | stop_reason check | No |
| Google Gemini | 2024, expanded 2026 | Constrained decoding | Safety filter object | No |
| xAI Grok | 2025 | Constrained decoding | Refusal object | No |
| Ollama (local) | 2024 | Grammar-based FSM | N/A (no policy layer) | Yes |
| vLLM / SGLang | 2024 | Grammar-based FSM | N/A | Yes |

OpenAI: The Reference Implementation

OpenAI's structured output is the most mature and the one most documentation references. The key requirements for strict mode:

- strict: true in the response format

- additionalProperties: false on every object in the schema

- Every property must appear in required (optional fields use Union[type, None] / nullable types)

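The real silent failure mode is leaving out strict: true. The request still succeeds, but the schema is then followed on a best-effort basis rather than enforced. With strict mode on, a hand-written schema that omits additionalProperties: false on any object is rejected with an error instead of being silently downgraded; the SDK's parse() helpers add it automatically when converting Pydantic or Zod models.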
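For hand-written schemas (rather than ones the SDK generates from a Pydantic or Zod model), a small normalizer can apply the three rules above. The sketch below is a hypothetical helper, make_strict is not an SDK function: it adds additionalProperties: false and a full required list to every object that declares properties, recursing through nested properties, items, $defs and anyOf, then wraps the result in the Chat Completions json_schema response format. It deliberately does not make fields nullable; that decision belongs in the schema itself.

```python
import copy
from typing import Any


def make_strict(schema: dict[str, Any]) -> dict[str, Any]:
    """Hypothetical helper: return a copy of `schema` that follows the strict-mode rules."""
    strict = copy.deepcopy(schema)  # never mutate the caller's schema
    _enforce(strict)
    return strict


def _enforce(node: Any) -> None:
    if isinstance(node, dict):
        # Every object with declared properties: no extra keys, every key required.
        if node.get("type") == "object" and isinstance(node.get("properties"), dict):
            node["additionalProperties"] = False
            node["required"] = list(node["properties"])
        # Recurse into nested schemas (properties, items, $defs, anyOf, ...).
        for value in node.values():
            _enforce(value)
    elif isinstance(node, list):
        for item in node:
            _enforce(item)


def strict_response_format(name: str, schema: dict[str, Any]) -> dict[str, Any]:
    # Shape of the response_format parameter for strict mode in Chat Completions.
    return {
        "type": "json_schema",
        "json_schema": {"name": name, "strict": True, "schema": make_strict(schema)},
    }


person_schema = {
    "type": "object",
    "properties": {
        "name": {"type": "string"},
        "address": {
            "type": "object",
            "properties": {"city": {"type": "string"}, "zip": {"type": ["string", "null"]}},
            "required": ["city"],
        },
    },
    "required": ["name"],
}
fmt = strict_response_format("person", person_schema)
print(fmt["json_schema"]["schema"]["properties"]["address"]["required"])  # ['city', 'zip']
```

Note that zip only became required without breaking anything because its type already allows null; a plain string field promoted to required would force the model to invent a value.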

Anthropic Claude

Anthropic's structured output approach is architecturally different from OpenAI's. Claude uses the tool use mechanism rather than a dedicated response_format parameter. You define your schema as a tool with input_schema, then instruct the model to call that tool:

```python
import anthropic
from pydantic import BaseModel


class ExtractedData(BaseModel):
    company: str
    revenue_usd_millions: float
    year: int


client = anthropic.Anthropic()

response = client.messages.create(
    model="claude-sonnet-4-6",
    max_tokens=1024,
    tools=[{
        "name": "extract_financial_data",
        "description": "Extract structured financial data from text",
        "input_schema": ExtractedData.model_json_schema(),
    }],
    tool_choice={"type": "tool", "name": "extract_financial_data"},
    messages=[{
        "role": "user",
        "content": "Acme Corp reported $2.3B in revenue for fiscal year 2025.",
    }],
)

# Pull out the tool call and validate its arguments against the same model
tool_use = next(b for b in response.content if b.type == "tool_use")
data = ExtractedData.model_validate(tool_use.input)
```

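The tool_choice force-selection ensures Claude always calls your extraction tool rather than responding with text. Forcing the tool does not by itself validate the arguments, which is why the example passes tool_use.input through ExtractedData. Generation-time schema enforcement comes from Anthropic's structured outputs feature, so check its documentation for how to enable the constrained-decoding guarantee on your target models.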

The Instructor Library: One API for All Providers

If your application uses multiple LLM providers, which most production systems do in 2026, managing each provider's structured output API separately adds friction. Instructor solves this.

Instructor patches any supported client (OpenAI, Anthropic, Gemini, Cohere, Groq, and more) with a unified structured output interface built on Pydantic:

```python
import instructor
import anthropic
from pydantic import BaseModel

# The same pattern works with instructor.from_openai(openai.OpenAI()) and other supported clients
client = instructor.from_anthropic(anthropic.Anthropic())


class UserProfile(BaseModel):
    name: str
    age: int
    occupation: str


profile = client.chat.completions.create(
    model="claude-sonnet-4-6",
    max_tokens=1024,  # Anthropic's Messages API requires max_tokens
    max_retries=3,  # Automatic retry with validation error feedback
    messages=[{"role": "user", "content": "John is a 34-year-old software engineer."}],
    response_model=UserProfile,
)

# profile is a validated UserProfile instance
```

Instructor's max_retries is particularly valuable: if validation fails (which can still happen for complex schemas with non-strict providers), Instructor automatically sends the validation error back to the model as context for a retry. This sharply reduces the failure rate, even for providers without native constrained decoding.
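The retry-with-feedback idea behind max_retries can be sketched without any provider at all. This is not Instructor's source code: parse_with_retries and fake_model are hypothetical, and the fake model simply returns a wrong type on its first attempt and corrected JSON once the validation error appears in the conversation.

```python
from typing import Callable, Optional

from pydantic import BaseModel, ValidationError


class UserProfile(BaseModel):
    name: str
    age: int
    occupation: str


def parse_with_retries(
    call_model: Callable[[list[dict]], str],
    messages: list[dict],
    max_retries: int = 3,
) -> UserProfile:
    history = list(messages)
    last_error: Optional[ValidationError] = None
    for _ in range(max_retries):
        raw = call_model(history)
        try:
            return UserProfile.model_validate_json(raw)
        except ValidationError as exc:
            last_error = exc
            # Show the model its own output plus the exact validation errors.
            history.append({"role": "assistant", "content": raw})
            history.append({
                "role": "user",
                "content": f"That output failed validation:\n{exc}\nReturn corrected JSON only.",
            })
    raise RuntimeError(f"no valid output after {max_retries} attempts") from last_error


def fake_model(history: list[dict]) -> str:
    """Hypothetical model: a wrong type first, a fixed answer after feedback."""
    if len(history) == 1:
        return '{"name": "John", "age": "thirty-four", "occupation": "software engineer"}'
    return '{"name": "John", "age": 34, "occupation": "software engineer"}'


profile = parse_with_retries(
    fake_model, [{"role": "user", "content": "John is a 34-year-old software engineer."}]
)
print(profile)  # name='John' age=34 occupation='software engineer'
```

The key design choice is that the error text goes back into the conversation: the model sees exactly which field failed and why, instead of a blind resend of the same prompt.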

With over 3 million monthly downloads and 11,000+ GitHub stars, Instructor is the safe default for any new Python project.

Handling Refusals: The Failure Mode Teams Miss

Strict mode introduces a failure mode that didn't exist in the regex era: refusals. When the model declines a request on content-policy grounds, OpenAI returns the explanation in message.refusal and leaves parsed set to None, rather than raising a parse error. Teams that don't handle this explicitly end up with cryptic errors in production.

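```python
class RefusalError(Exception):
    """Application-level error for model refusals (define your own)."""


# WRONG: on a refusal, parsed is None, so the first attribute access
# further down fails with a confusing AttributeError
review = response.choices[0].message.parsed

# RIGHT: check for refusal first
message = response.choices[0].message
if message.refusal:
    # Handle gracefully: log, return a fallback, alert, etc.
    raise RefusalError(f"Model refused: {message.refusal}")
review = message.parsed
```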

For Anthropic's tool-use approach, a refusal shows up as a text block instead of a tool_use block, with a stop_reason other than "tool_use". Always check response.stop_reason and iterate response.content blocks defensively:

```python
if response.stop_reason != "tool_use":
    # Model responded with text instead of calling the tool
    text_content = next((b.text for b in response.content if b.type == "text"), None)
    raise RefusalError(f"Unexpected response: {text_content}")
```

Treating refusals as first-class error types — not edge cases — is the mark of production-grade structured output handling.
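Both checks can live in small helpers so every call site handles refusals the same way. The sketch below is hypothetical (the helper names and RefusalError are not part of either SDK) and uses types.SimpleNamespace objects as stand-ins for real responses so it runs offline; the attributes it reads (choices[0].message.refusal and .parsed, stop_reason, and the type, name, input and text fields of content blocks) are the ones used in the snippets above.

```python
from types import SimpleNamespace
from typing import Any


class RefusalError(Exception):
    """Hypothetical application-level error for refusals and off-schema replies."""


def openai_parsed_or_raise(response: Any) -> Any:
    message = response.choices[0].message
    if message.refusal:
        raise RefusalError(f"Model refused: {message.refusal}")
    return message.parsed


def anthropic_tool_input_or_raise(response: Any, tool_name: str) -> dict:
    if response.stop_reason != "tool_use":
        text = next((b.text for b in response.content if b.type == "text"), None)
        raise RefusalError(f"stop_reason={response.stop_reason!r}: {text}")
    for block in response.content:
        if block.type == "tool_use" and block.name == tool_name:
            return block.input
    raise RefusalError(f"No call to {tool_name!r} in the response")


# Offline stand-ins shaped like the SDK responses.
refused = SimpleNamespace(
    choices=[SimpleNamespace(message=SimpleNamespace(refusal="I can't help with that.", parsed=None))]
)
declined = SimpleNamespace(
    stop_reason="end_turn",
    content=[SimpleNamespace(type="text", text="I'd rather not extract that.")],
)
for call in (lambda: openai_parsed_or_raise(refused),
             lambda: anthropic_tool_input_or_raise(declined, "extract_financial_data")):
    try:
        call()
    except RefusalError as err:
        print("refusal:", err)
```

Because both helpers raise the same exception type, the calling code can route refusals to one handler (logging, fallback, alerting) regardless of provider.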

Common Mistakes in Production

1. Using JSON Mode on new code. JSON Mode guarantees syntactic validity but not schema compliance. If you have a schema, use strict structured outputs.

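2. Forgetting additionalProperties: false. OpenAI's strict mode requires this on every object, including nested ones. A hand-written schema that misses it on a nested type is rejected when strict: true is set; if strict is missing too, the request succeeds but the schema is only followed on a best-effort basis.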

3. Making fields optional instead of nullable. In strict mode, every field must be in required. To allow a missing value, declare the field as Optional[str] with no default (Python) or use .nullable() (Zod). The generated schema is a string-or-null union, so the field stays required while still accepting null.

4. Skipping validation on "guaranteed" output. Constrained decoding guarantees schema compliance. It does not guarantee semantic correctness: a model can return a perfectly schema-valid {"sentiment": "positive"} for a clearly negative review. Always validate business logic beyond the schema.

5. Not handling streaming. For long responses, structured output works with streaming too. OpenAI's Python SDK provides client.beta.chat.completions.stream(), which accepts the same response_format as parse(). Don't disable streaming for structured outputs; use the streaming-aware APIs.

6. Assuming all models support all schema features. Complex schemas with deeply nested objects, anyOf, or $ref references have varying support across providers and model versions. Test your specific schema against your target model in staging.
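Mistakes 3 and 4 are both handled in the model definition. In this Pydantic v2 sketch (the Review model and its score threshold are hypothetical), an Optional field with no default stays in required but accepts null, and a model_validator enforces a business rule that JSON Schema cannot express.

```python
from typing import Literal, Optional

from pydantic import BaseModel, ValidationError, model_validator


class Review(BaseModel):
    sentiment: Literal["positive", "negative", "neutral"]
    score: int  # 1-10
    # Mistake 3: Optional with no default is nullable but still required.
    reviewer_name: Optional[str]

    # Mistake 4: a semantic rule that schema compliance alone cannot guarantee.
    @model_validator(mode="after")
    def sentiment_matches_score(self) -> "Review":
        if self.sentiment == "positive" and self.score <= 3:
            raise ValueError("positive sentiment with a score of 3 or lower")
        return self


def is_valid(data: dict) -> bool:
    try:
        Review.model_validate(data)
    except ValidationError:
        return False
    return True


schema = Review.model_json_schema()
print(schema["required"])  # ['sentiment', 'score', 'reviewer_name']
print(schema["properties"]["reviewer_name"]["anyOf"])  # [{'type': 'string'}, {'type': 'null'}]
print(is_valid({"sentiment": "positive", "score": 2, "reviewer_name": None}))  # False
```

The last call is exactly the case from mistake 4: the payload is schema-valid, but the business rule rejects it.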

FAQ

Q: Is constrained decoding slower than regular generation?

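At inference time, the FSM lookup at each step adds minimal overhead, typically under a 2% latency increase for common JSON schemas. One caveat: OpenAI notes that the first request with a new schema takes longer while the schema is processed, and later requests reuse the cached result. The bigger effect comes from eliminated retries: a strict structured output call that succeeds on the first try never pays the full generation latency twice, while a JSON Mode call with a 10% retry rate pays it again on every failure.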
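The retry cost can be put in numbers with a simple model, assuming each attempt fails independently with probability p and every retry costs as much as the first call. The number of attempts N until success is then geometric:

$$
\mathbb{E}[N] = \sum_{k=1}^{\infty} k\,p^{k-1}(1-p) = \frac{1}{1-p}
$$

With p = 0.10 this gives E[N] = 1/0.9 ≈ 1.11, so about 11% more generation time and tokens on average, roughly five times the under-2% decoding overhead, and any request that does fail takes at least twice as long as a single call.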

Q: Can I use structured outputs with streaming?

Yes. All major providers support streaming with structured outputs. The response is streamed token by token, but the complete, validated object is only available after the stream finishes, since a partial JSON document is generally not valid JSON. OpenAI's Python SDK provides client.beta.chat.completions.stream(); once the stream has been consumed, stream.get_final_completion() returns a completion whose message.parsed holds the typed object.
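Why parsing waits for the end of the stream is easy to see with a stdlib-only sketch. The chunks below are hypothetical text deltas, not a real SDK stream; the helper tries to parse after every chunk purely to illustrate that intermediate buffers are not valid JSON and only the completed buffer parses.

```python
import json
from typing import Iterable


def accumulate_and_parse(chunks: Iterable[str]) -> tuple[dict, int]:
    """Concatenate streamed text deltas and parse once the stream is complete.

    Also returns how many buffers along the way were valid JSON; the per-chunk
    json.loads is only there for illustration and would be wasteful in practice.
    """
    buffer = ""
    valid_buffers = 0
    for chunk in chunks:
        buffer += chunk
        try:
            json.loads(buffer)
            valid_buffers += 1
        except json.JSONDecodeError:
            pass  # expected mid-stream: the object is still open
    return json.loads(buffer), valid_buffers


deltas = ['{"sentiment": "neg', 'ative", "sco', 're": 2, "would_recommend": fa', 'lse}']
result, valid = accumulate_and_parse(deltas)
print(result)  # {'sentiment': 'negative', 'score': 2, 'would_recommend': False}
print(valid)  # 1 (only the final buffer)
```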

Q: What happens if my schema is too complex?

OpenAI's strict mode supports most JSON Schema features but has some limitations: $ref within union types, certain anyOf patterns, and deeply recursive schemas may not be supported. Check the list of supported schema features in OpenAI's Structured Outputs documentation. For complex schemas, Instructor's retry mechanism handles most edge cases by turning validation failures into feedback prompts for the model.

Q: Should I use structured outputs for every LLM call?

No. Structured outputs are for calls where you need to extract typed data or drive application logic. For conversational responses, creative generation, or any output that a human reads directly, free-form text is more appropriate. Force-fitting a schema onto a conversational response constrains the model unnecessarily.

Q: How do structured outputs work with local models?

Local inference frameworks like Ollama, vLLM, and SGLang support grammar-based constrained decoding. In Ollama, pass a format parameter with your JSON Schema. In vLLM, use guided_json. The Outlines library provides a Python interface for constrained decoding across multiple local backends.
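For Ollama, the format parameter can be exercised with nothing but the standard library and Pydantic. This is a sketch under assumptions: it targets Ollama's default local endpoint http://localhost:11434/api/chat, uses llama3.1 as a placeholder model name, and the Country model is hypothetical. Only the payload builder runs without a server; chat_structured needs a running Ollama instance.

```python
import json
import urllib.request

from pydantic import BaseModel


class Country(BaseModel):
    name: str
    capital: str


def build_chat_payload(model: str, prompt: str, schema: dict) -> dict:
    # Request body for Ollama's /api/chat; "format" carries the JSON Schema.
    return {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "format": schema,
        "stream": False,
    }


def chat_structured(payload: dict, host: str = "http://localhost:11434") -> Country:
    request = urllib.request.Request(
        f"{host}/api/chat",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request) as resp:
        body = json.load(resp)
    # The constrained output arrives as a JSON string in message.content.
    return Country.model_validate_json(body["message"]["content"])


payload = build_chat_payload("llama3.1", "Tell me about Canada.", Country.model_json_schema())
# With a local server running: country = chat_structured(payload)
```

Validating message.content with the same model keeps the schema-first pattern intact even when decoding runs on your own hardware.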

Key Takeaways

- JSON Mode is legacy. Strict structured outputs enforce schema compliance at generation time, not post-processing.

- Schema-first is now standard. Write your Pydantic or Zod model first; generate your JSON Schema from it automatically.

- All major API providers ship native structured outputs as of early 2026 — OpenAI, Anthropic, Gemini, Cohere, and xAI.

- Refusals are a first-class failure mode. Always check message.refusal before parsing OpenAI responses; check stop_reason for Anthropic.

- Instructor is the safe default for multi-provider Python projects: unified interface, automatic retries, 3M+ monthly downloads.

- Constrained decoding guarantees schema compliance, not semantic accuracy. Your application still owns business logic validation.

If you're writing regex to parse LLM output in 2026, you're paying a reliability tax that no longer needs to exist. Every major provider now ships constrained decoding with schema guarantees. Start with Pydantic or Zod, send your schema as the response format, and let the infrastructure handle the rest. The migration from JSON Mode to strict structured outputs is a one-afternoon project that eliminates an entire class of production failures.

