Think@n: Cut LLM Inference Cost 49% with Deep-Thinking Ratio

A February 2026 paper from researchers at the University of Virginia and Google proposes a deceptively simple idea: instead of letting every candidate response run to completion before voting, reject the weak ones early, after just 50 tokens, using a signal called the Deep-Thinking Ratio (DTR). The reported result is roughly half the inference cost with no loss in accuracy.

The paper is arXiv:2602.13517, "Think Deep, Not Just Long: Measuring LLM Reasoning Effort via Deep-Thinking Tokens."

The Core Problem with Token-Length Reasoning

Modern reasoning LLMs generate long chains of thought. A common assumption is that longer responses indicate harder thinking. The paper argues this is wrong.

Their finding: increased token count does not consistently correlate with accuracy, and sometimes signals "overthinking" — where the model rambles toward a wrong answer rather than reasoning toward a correct one. Raw length is a noisy proxy for reasoning quality.

What Is a Deep-Thinking Token?

A deep-thinking token is one where the model's internal prediction (the distribution over next tokens) changes significantly between earlier and later transformer layers before converging.

In a standard transformer forward pass, each layer refines the representation of each token. For most tokens, the prediction stabilizes quickly — early and late layers agree. For some tokens, there's substantial revision as deeper layers reconsider the prediction. These are deep-thinking tokens.

The Deep-Thinking Ratio (DTR) is the fraction of tokens in a sequence that qualify as deep-thinking:

DTR = (number of deep-thinking tokens) / (total tokens generated so far)

A high DTR means the model is genuinely working through the problem. A low DTR suggests shallow pattern-matching or repetitive filler.
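To make the definition concrete, here is a minimal sketch of how a DTR could be computed once per-layer next-token distributions are available. The exact criterion the paper uses is not reproduced here, so the specifics are assumptions: Jensen-Shannon divergence as the distance measure, the `tol` and `depth_fraction` parameters, and the helper names `settling_layer` and `deep_thinking_ratio` are all hypothetical.

```python
import math

def js_divergence(p, q):
    """Jensen-Shannon divergence (natural log) between two distributions."""
    m = [(a + b) / 2 for a, b in zip(p, q)]

    def kl(x, y):
        return sum(a * math.log(a / b) for a, b in zip(x, y) if a > 0)

    return 0.5 * kl(p, m) + 0.5 * kl(q, m)

def settling_layer(layer_dists, tol=0.1):
    """Earliest layer from which every later layer stays within tol of the final prediction."""
    final = layer_dists[-1]
    settle = len(layer_dists) - 1
    for i in range(len(layer_dists) - 1, -1, -1):
        if js_divergence(layer_dists[i], final) > tol:
            break
        settle = i
    return settle

def deep_thinking_ratio(token_layer_dists, depth_fraction=0.75, tol=0.1):
    """Fraction of tokens whose prediction settles only in the deepest layers (assumed criterion)."""
    if not token_layer_dists:
        return 0.0
    deep_count = 0
    for layer_dists in token_layer_dists:
        cutoff = depth_fraction * (len(layer_dists) - 1)
        if settling_layer(layer_dists, tol) >= cutoff:
            deep_count += 1
    return deep_count / len(token_layer_dists)

# Toy data: 4 layers, 2-word vocabulary.
shallow_token = [[0.9, 0.1]] * 4  # every layer agrees with the final one
deep_token = [[0.9, 0.1], [0.9, 0.1], [0.9, 0.1], [0.1, 0.9]]  # flips at the last layer
print(deep_thinking_ratio([shallow_token, deep_token, shallow_token, shallow_token]))  # 0.25
```

In a real model the per-layer distributions would come from projecting each layer's hidden state through the output head, which is why DTR needs access to model internals.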

Think@n: The Filtering Algorithm

Standard self-consistency (SC) generates n candidate responses to a question, then uses majority voting to pick the final answer. The problem: you pay for all n full responses before voting.
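As a point of reference, the voting step of self-consistency is small enough to sketch in a few lines. The function name `majority_vote` and the use of plain answer strings are illustrative assumptions; a real pipeline would first extract and normalize the final answer from each response.

```python
from collections import Counter

def majority_vote(answers):
    """Return the most frequent answer; ties go to the answer seen first."""
    if not answers:
        raise ValueError("need at least one answer")
    return Counter(answers).most_common(1)[0][0]

# Five final answers extracted from five fully generated responses.
print(majority_vote(["42", "17", "42", "42", "17"]))  # 42
```

The vote itself is cheap. The cost lies in producing the n full responses that feed it.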

Think@n modifies this:

- Start generating n candidate responses in parallel.

- After each candidate's first k tokens (the paper uses k=50), compute the DTR for that prefix.

- Reject candidates with DTR below a threshold. Stop generating them.

- Continue only the high-DTR candidates to completion.

- Majority vote on the surviving candidates.

Because weak candidates are cut off at 50 tokens instead of running to hundreds or thousands, the total token budget drops sharply.
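The size of the saving can be made explicit with a little arithmetic. As a simplifying assumption (not a claim from the paper), suppose every full response has the same length $L$, the prefix has length $k$, and $r$ of the $n$ candidates are rejected. Survivors cost $L$ tokens each, since the prefix is part of the response, and rejected candidates cost $k$ tokens each:

```latex
\begin{aligned}
T_{\text{SC}} &= nL \\
T_{\text{Think@}n} &= rk + (n - r)L \\
\text{savings} &= 1 - \frac{T_{\text{Think@}n}}{T_{\text{SC}}}
               = \frac{r}{n}\left(1 - \frac{k}{L}\right)
\end{aligned}
```

With illustrative values $n = 8$, $k = 50$, $L = 2000$ and $r = 4$, the saving is $0.5 \times 0.975 \approx 48.8\%$. When $k \ll L$, the saving is essentially the rejection rate $r/n$.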

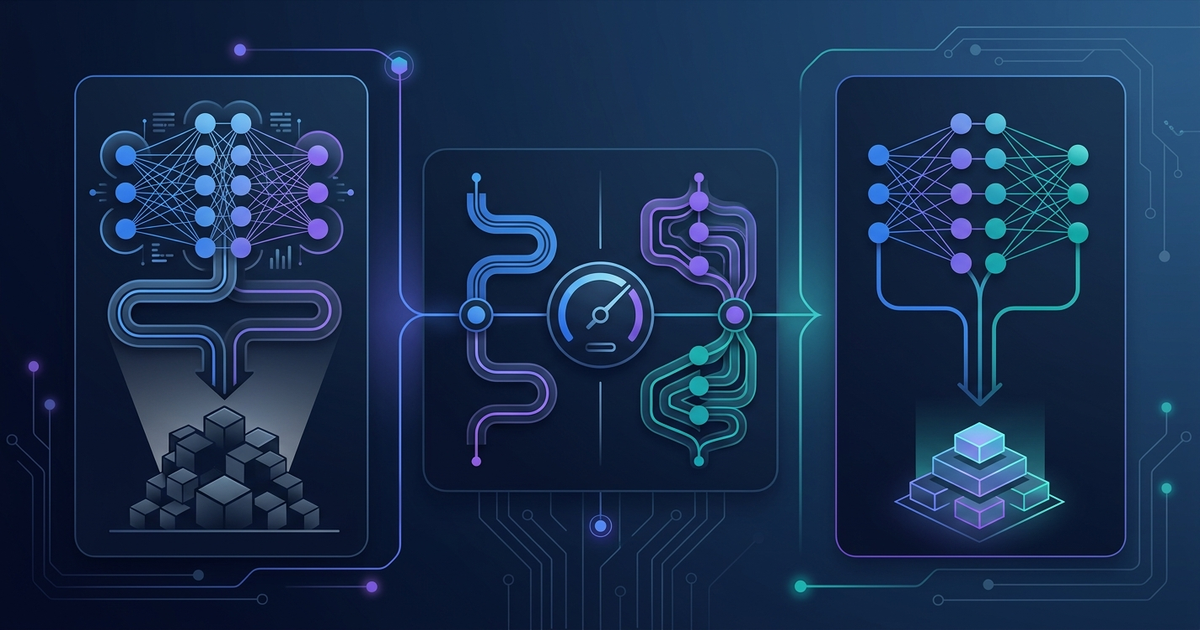

Here is a simplified illustration of the logic:

    import random

    def simulate_dtr(prefix_tokens: int, quality: float) -> float:
        """
        Approximate DTR for a candidate. In practice this requires
        access to transformer hidden states across layers.

        prefix_tokens: prefix length (unused by this toy model)
        quality: ground-truth signal (1.0 = high-quality candidate)
        """
        base = quality * 0.6
        noise = random.gauss(0, 0.1)
        return max(0.0, min(1.0, base + noise))

    def think_at_n(
        n: int,
        prefix_length: int = 50,
        dtr_threshold: float = 0.35,
        full_response_tokens: int = 500,
    ) -> dict:
        candidates = []
        think_at_n_tokens = 0

        for i in range(n):
            quality = random.random()  # simulated; real quality is unknown
            dtr = simulate_dtr(prefix_length, quality)
            if dtr >= dtr_threshold:
                # Survivor: runs to completion (the prefix is part of the full response).
                think_at_n_tokens += full_response_tokens
                candidates.append({"id": i, "dtr": dtr, "quality": quality})
            else:
                # Rejected: only the prefix was generated.
                think_at_n_tokens += prefix_length

        sc_tokens = n * full_response_tokens  # baseline: all n responses run in full
        savings_pct = (1 - think_at_n_tokens / sc_tokens) * 100

        return {
            "n": n,
            "surviving_candidates": len(candidates),
            "sc_tokens": sc_tokens,
            "think_at_n_tokens": think_at_n_tokens,
            "token_savings_pct": round(savings_pct, 1),
        }

    result = think_at_n(n=8, dtr_threshold=0.35)
    print(result)

    # Example output (varies from run to run):
    # {'n': 8, 'surviving_candidates': 4, 'sc_tokens': 4000,
    #  'think_at_n_tokens': 2200, 'token_savings_pct': 45.0}

This is a conceptual simulation — real DTR computation requires access to per-layer hidden states in the transformer, not just output tokens. The reference implementation works with model weights directly.

Results from the Paper

On AIME 25 (a hard math benchmark):

| Method | Accuracy | Total Tokens |
|---|---|---|
| Self-consistency (n=8) | 62.3% | ~16,000 |
| Think@n (k=50, n=8) | 62.7% | ~8,200 |

Think@n slightly exceeds SC accuracy while using 49% fewer tokens. The paper finds this holds across multiple reasoning models and benchmarks.

A counterintuitive finding: using a 50-token prefix to estimate DTR works better than using longer prefixes (100 or 200 tokens). The first 50 tokens contain enough signal — longer prefixes start introducing noise from filler text.

Why DTR Works as an Early Signal

The intuition: when a model opens a response by engaging immediately with the core structure of the problem, its early tokens show high revision activity across layers (deep-thinking tokens). When it opens with boilerplate preamble or restates the question, there is little revision and the early tokens are shallow.

This is analogous to how an experienced developer, when tackling a hard problem, often starts typing core logic immediately rather than writing a preamble comment. The shape of the first 50 tokens reveals intent.

Practical Implications

For developers running LLM pipelines with self-consistency:

- Cost: if you currently generate 8 candidates and pick the majority answer, Think@n can cut your inference bill by roughly half for the same quality.

- Latency: early rejection means some response slots finish faster, which can reduce tail latency in batched workloads.

- Applicability: DTR requires access to hidden states — it is not available through standard API completions. You need to run your own model or use a provider that exposes internal states. For API-only setups, a simpler proxy (token entropy from output logprobs, if available) may partially approximate DTR.
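For the API-only case, the entropy proxy mentioned above could look roughly like the sketch below. It assumes the provider returns, for each generated token, a list of top-k log probabilities as plain floats; that input shape and the function names `token_entropy` and `mean_prefix_entropy` are hypothetical. This measures uncertainty in the output distribution, not revision across layers, so it is at best a loose stand-in for DTR.

```python
import math

def token_entropy(top_logprobs):
    """Entropy (nats) of one token's top-k distribution, renormalized to sum to 1."""
    probs = [math.exp(lp) for lp in top_logprobs]
    total = sum(probs)
    if total == 0:
        return 0.0
    probs = [p / total for p in probs]
    return -sum(p * math.log(p) for p in probs if p > 0)

def mean_prefix_entropy(prefix_logprobs):
    """Average per-token entropy over a prefix (for example, the first 50 tokens)."""
    if not prefix_logprobs:
        return 0.0
    return sum(token_entropy(t) for t in prefix_logprobs) / len(prefix_logprobs)

# Two tokens: one fully confident, one split evenly between two options.
prefix = [[0.0], [math.log(0.5), math.log(0.5)]]
print(round(mean_prefix_entropy(prefix), 4))  # 0.3466
```

Whether a high or low value of this proxy tracks answer quality would need to be validated on your own data before using it to reject candidates.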

Access

- Paper: arXiv:2602.13517

- Reference implementation: compchap/Think-Deep-Not-Long
