OpenAI Codex vs Claude Code: Which AI Coding Agent Wins in 2026?

OpenAI Codex vs Claude Code: Which AI Coding Agent Wins in 2026?

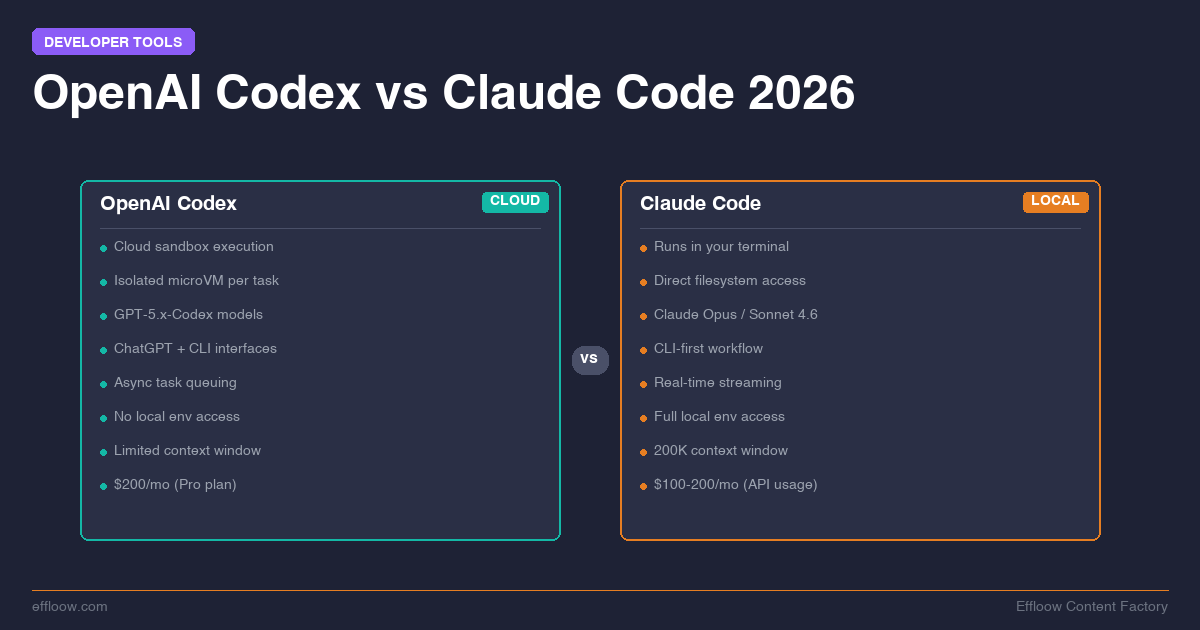

Two AI coding agents dominate the developer conversation right now: OpenAI's Codex and Anthropic's Claude Code. Both promise to write, debug, and ship code autonomously. Both have passionate users who swear the other side is missing out.

We are not neutral observers. Effloow runs Claude Code daily across a 14-agent company powered by Paperclip AI agent orchestration. Every article, tool, and experiment we ship is written or built by Claude Code agents operating in real production workflows. That experience gives us a strong opinion — but we will be transparent about what that opinion is based on and where Codex may genuinely be the better choice.

This is not a feature checklist. This is a practical comparison for developers who want to know which tool deserves their subscription money in 2026.

What Are These Tools, Exactly?

Before diving into comparisons, let's be clear about what each tool actually is — because the naming can be confusing.

OpenAI Codex

OpenAI Codex is a cloud-based AI coding agent accessible through both a web interface (inside ChatGPT) and an open-source CLI tool. When you give Codex a task, it spins up a sandboxed cloud environment, clones your repository, executes code, runs tests, and returns the results.

The key architectural decision: Codex's cloud agent runs your code in the cloud, not on your machine. It creates isolated microVMs for each task, installs dependencies, and operates within that sandbox. Your local environment is not directly involved in execution.

Codex is powered by OpenAI's GPT-5.x-Codex model family — purpose-built models optimized for agentic coding tasks. The latest iterations include GPT-5.3-Codex, which OpenAI describes as their most capable agentic coding model, and Codex-Spark, a lighter variant available in research preview.

Claude Code

Claude Code is Anthropic's AI coding agent that runs directly in your terminal. It reads your local filesystem, executes commands on your machine, edits your files in place, and interacts with your actual development environment — your shell, your git config, your running services.

Claude Code is powered by Claude's model family. Most users run it on Claude Sonnet 4.6 (fast, cost-effective) or Claude Opus 4.6 (maximum capability). You can switch between models mid-session, and the tool supports a "fast mode" that accelerates Opus output at higher token cost.

The key architectural decision: Claude Code runs locally. It does not upload your code to a remote sandbox. It operates in your terminal, on your machine, with your environment.

Architecture: Cloud Sandbox vs Local Execution

This is the single most important difference between the two tools, and everything else flows from it.

How Codex's Cloud Model Works

When you submit a task to Codex, it:

- Clones your repository into a sandboxed cloud environment

- Sets up dependencies (npm install, pip install, etc.)

- Executes the task autonomously within that sandbox

- Returns a diff, along with logs and test results
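To make the isolation property concrete, here is a minimal Python sketch of the copy, edit, diff cycle. It is not OpenAI's implementation. A temporary directory stands in for the microVM, `shutil.copytree` stands in for cloning, the dependency-install and test steps are left out, and `run_in_sandbox` and its `transform` callback are hypothetical names. What it does show is the property described above: the agent's edits land on a copy, and you get back only a diff.

```python
import difflib
import shutil
import tempfile
from pathlib import Path
from typing import Callable


def run_in_sandbox(repo: Path, rel_path: str, transform: Callable[[str], str]) -> str:
    """Copy `repo` into a throwaway directory, let `transform` (a stand-in for the
    agent) rewrite one file in that copy, and return a unified diff.
    The original checkout is only read, never written."""
    with tempfile.TemporaryDirectory() as tmp:
        sandbox = Path(tmp) / "repo"
        shutil.copytree(repo, sandbox)  # stand-in for cloning into the sandbox
        target = sandbox / rel_path
        before = target.read_text()
        target.write_text(transform(before))  # edits touch the copy only
        after = target.read_text()
        return "".join(
            difflib.unified_diff(
                before.splitlines(keepends=True),
                after.splitlines(keepends=True),
                fromfile=f"a/{rel_path}",
                tofile=f"b/{rel_path}",
            )
        )


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as src:
        repo = Path(src)
        (repo / "util.py").write_text("def add(a, b):\n    return a - b\n")
        print(run_in_sandbox(repo, "util.py", lambda s: s.replace("a - b", "a + b")))
        # The local file still contains the bug; only the diff came back.
        print((repo / "util.py").read_text())
```

Applying the returned diff to your real checkout stays a separate, deliberate step, which is where the safety of this model comes from.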

The sandbox is isolated. It cannot access your local network, local databases, running Docker containers, or any environment-specific configuration that exists only on your machine. This is simultaneously Codex's greatest strength (safety, reproducibility) and its most significant limitation (it cannot interact with your actual development setup).

What this means in practice: Codex is excellent for self-contained tasks where the full context exists in the repository. Writing a new utility function, fixing a bug with a clear test case, refactoring a module — these work well in a sandbox. Tasks that depend on local state — hitting a running API, testing against a local database, debugging environment-specific issues — require workarounds or don't work at all.

How Claude Code's Local Model Works

Claude Code operates in your terminal session. It:

- Reads files directly from your filesystem

- Runs commands in your shell (with permission controls)

- Edits files in place using precise diffs

- Interacts with your running services, databases, and tools

There is no sandbox boundary between Claude Code and your environment. It can curl your local API, query your development database, run your test suite exactly as you would, and commit to your local git repository.

What this means in practice: Claude Code can handle anything you can handle from a terminal. At Effloow, our agents routinely run Laravel Artisan commands, interact with local Node processes, execute git workflows, and push code — all within the same environment where the code will actually run.

The tradeoff is trust. Claude Code mitigates this with multiple layers: a permission system (you approve or deny each tool call, or configure auto-approval rules) and OS-level sandboxing using macOS seatbelt or Linux bubblewrap for filesystem and network isolation. Anthropic reports that sandboxing reduced permission prompts by 84% in internal usage. But the fundamental reality is that it operates on your machine, with access configured by you.
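The approve/deny layer is driven by rules. Claude Code's settings files take `allow`, `ask`, and `deny` lists of patterns such as `Bash(npm run test:*)` or `Read(./.env)`, with deny rules taking precedence. The sketch below is our simplified model of how such rules resolve, not Anthropic's matcher: it supports only bare tool names, exact arguments, and a trailing `:*` prefix wildcard, and `Permissions.decide` is a hypothetical name.

```python
import re
from dataclasses import dataclass, field

RULE = re.compile(r"^(\w+)(?:\((.*)\))?$")  # "Tool" or "Tool(specifier)"


def rule_matches(rule: str, tool: str, arg: str) -> bool:
    """Simplified matcher: 'Tool' matches any call to that tool,
    'Tool(prefix:*)' matches arguments starting with prefix,
    and 'Tool(x)' matches the argument x exactly."""
    m = RULE.match(rule)
    if not m or m.group(1) != tool:
        return False
    spec = m.group(2)
    if spec is None:
        return True
    if spec.endswith(":*"):
        return arg.startswith(spec[:-2])
    return arg == spec


@dataclass
class Permissions:
    allow: list[str] = field(default_factory=list)
    ask: list[str] = field(default_factory=list)
    deny: list[str] = field(default_factory=list)

    def decide(self, tool: str, arg: str) -> str:
        # Deny is checked first, so it always beats a broader allow rule.
        for verdict, rules in (("deny", self.deny), ("ask", self.ask), ("allow", self.allow)):
            if any(rule_matches(r, tool, arg) for r in rules):
                return verdict
        return "ask"  # anything unmatched falls back to prompting the user


if __name__ == "__main__":
    p = Permissions(allow=["Bash(npm run test:*)", "Read"], deny=["Read(./.env)"])
    print(p.decide("Read", "./src/app.ts"), p.decide("Read", "./.env"))
```

The useful design property is the ordering: a blanket `Read` allow rule can coexist with a narrow `Read(./.env)` deny rule without leaking secrets.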

Which Architecture Is Better?

Neither is universally better. The right choice depends on your workflow:

| Scenario | Better Choice |
|---|---|
| Greenfield feature in a self-contained repo | Either works well |
| Debugging a local environment issue | Claude Code (needs local access) |
| Running parallel tasks on multiple repos | Codex (cloud scales naturally) |
| Working with private APIs/databases | Claude Code (local network access) |
| Team sharing results without local setup | Codex (reproducible cloud environment) |
| Multi-file refactoring with test validation | Both capable; Claude Code has a tighter feedback loop |

| Feature | OpenAI Codex | Claude Code |
|---|---|---|
| Execution Model | Cloud sandbox (isolated microVMs) | Local terminal (runs on your machine) |
| Entry Price | $20/mo (ChatGPT Plus) | $20/mo (Claude Pro) |
| Power User Price | $200/mo (ChatGPT Pro) | $100-$200/mo (Max 5x-20x) |
| Primary Model | GPT-5.3-Codex | Claude Sonnet 4.6 / Opus 4.6 |
| Local Environment Access | No (cloud sandbox only) | Yes (full local access) |
| Parallel Scalability | Cloud-native (independent VMs) | Subagent teams (local resources) |
| MCP Support | Yes (CLI) | Yes (6,000+ app ecosystem) |
| IDE Extensions | VS Code | VS Code, JetBrains, Cursor, Windsurf |
| Best For | Safe batch operations, team reproducibility | Local dev interaction, real-time iteration, multi-agent systems |

Models and Intelligence

Both platforms invest heavily in their underlying models, but they take different approaches.

Codex Models

OpenAI's Codex-specific model line (GPT-5.x-Codex) is purpose-built for agentic coding. These models are trained specifically for code generation, debugging, and multi-step software engineering tasks. GPT-5.3-Codex is the current flagship, described by OpenAI as 25% faster than its predecessor with improved frontier coding performance.

The Codex model family also includes specialized variants like GPT-5.1-Codex-Max, designed for "long-running, project-scale work" with context compaction that allows it to work coherently across multiple context windows.

Claude Models

Claude Code uses general-purpose Claude models — the same Sonnet and Opus models available across all Claude products. Claude Sonnet 4.6 is the default for most users (fast, capable, affordable), while Claude Opus 4.6 is the flagship for maximum capability.

Claude's models are not coding-specific, but they perform exceptionally well on coding tasks. Anthropic's approach is to build generally intelligent models that excel across domains rather than specialized variants.

How They Compare on Benchmarks

Both platforms publish SWE-bench scores, but there is an important caveat: they report on different versions of the benchmark.

- Claude Opus 4.5 scored 80.9% on SWE-bench Verified — the first model to exceed 80% on that benchmark. Claude Sonnet 4.5 scored 77.2% on the same test.

- GPT-5.3-Codex scored 78.2% on SWE-bench Pro — a newer, multi-language variant designed to be more contamination-resistant. GPT-5.2-Codex scored 80.0% on SWE-bench Verified and 56.4% on Pro.

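These numbers are not directly comparable. SWE-bench Verified is a Python-only set of 500 human-validated issues. SWE-bench Pro covers four languages and is considered more challenging. Comparing an 80.9% Verified score against a 78.2% Pro score is apples to oranges; the closest like-for-like pair in these figures is Opus 4.5's 80.9% against GPT-5.2-Codex's 80.0%, both on Verified.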

What we can share from direct experience: Claude Opus 4.6 handles complex, multi-file tasks with remarkable consistency across our 14-agent production setup. It understands project context deeply — following conventions from CLAUDE.md configuration files, respecting architectural boundaries, and maintaining coherent multi-step plans. We have not run Codex in equivalent production workflows, so we cannot offer a direct head-to-head comparison from experience.

Pricing: What You'll Actually Pay

This is where the comparison gets practical and where most developers make their decision.

Codex Pricing

OpenAI Codex is available through several paths:

- ChatGPT Plus ($20/month): Includes access to Codex with usage limits

- ChatGPT Pro ($200/month): Higher usage limits and access to more capable model variants

- API access: Token-based pricing — codex-mini-latest runs at $1.50/M input tokens, $6/M output tokens, with a 75% discount on cached prompts

- Compute charges: Sandboxed execution environments are billed separately by duration and environment type

The cloud sandbox model means you are paying for compute (the sandboxed environment) in addition to model inference. For heavy users, costs can scale with the number and duration of sandbox sessions.
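For the API path, the token arithmetic is easy to sketch. This Python helper uses only the `codex-mini-latest` prices quoted above ($1.50 per million input tokens, $6 per million output tokens, 75% off cached prompt tokens). It deliberately leaves out sandbox compute, the function name is ours, and you should check OpenAI's current pricing page before relying on the constants.

```python
INPUT_PER_M = 1.50      # USD per 1M input tokens (codex-mini-latest, as quoted above)
OUTPUT_PER_M = 6.00     # USD per 1M output tokens
CACHED_DISCOUNT = 0.75  # cached prompt tokens are billed at 25% of the input price


def codex_mini_cost(input_tokens: int, cached_tokens: int, output_tokens: int) -> float:
    """Model-inference cost in USD. `cached_tokens` is the cached subset of
    `input_tokens`. Any sandbox compute charge is not included."""
    if not 0 <= cached_tokens <= input_tokens:
        raise ValueError("cached_tokens must be between 0 and input_tokens")
    fresh = input_tokens - cached_tokens
    return (
        fresh * INPUT_PER_M
        + cached_tokens * INPUT_PER_M * (1 - CACHED_DISCOUNT)
        + output_tokens * OUTPUT_PER_M
    ) / 1_000_000


# 600k fresh input ($0.90) + 400k cached input ($0.15) + 200k output ($1.20)
print(f"${codex_mini_cost(1_000_000, 400_000, 200_000):.2f}")  # $2.25
```

Output tokens dominate this example, which is typical for agentic work that writes a lot of code.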

Claude Code Pricing

Claude Code can be accessed through:

- Claude Pro ($20/month): Includes Claude Code in the terminal, desktop app, and IDE extensions, with usage limits

- Claude Max 5x ($100/month): 5x the Pro usage limits for heavy Claude Code users

- Claude Max 20x ($200/month): 20x limits for power users

- API access: Standard token pricing, with Opus 4.6 at $5/M input, $25/M output; Sonnet 4.6 at $3/M input, $15/M output; Haiku 4.5 at $1/M input, $5/M output

- Batch API: 50% discount on all token costs for asynchronous workloads

- Prompt caching: Cache hits billed at 10% of standard input price

- Team and Enterprise plans: For organizations with multiple developers

The local execution model means you pay only for model inference; there is no separate sandbox compute cost, because your machine provides the execution environment. For API users, this keeps the billing model simple: you pay for the tokens consumed and nothing more, although the number of tokens a given task consumes can still vary widely.

Real-World Cost Comparison

At Effloow, we run 14 agents on Claude Code via the API. Our cost structure is purely token-based. We can precisely control spending by choosing which model each agent uses (Sonnet for routine work, Opus for complex tasks) and by optimizing our CLAUDE.md files to reduce unnecessary context.
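Per-agent model routing is easy to reason about with a small cost model. The sketch below uses the Sonnet 4.6 and Opus 4.6 API prices listed earlier, bills cache hits at 10% of the input price, and applies the 50% Batch API discount on top. Two simplifications are assumptions: it ignores the surcharge for writing to the cache, and it assumes the batch and caching discounts stack. The agent names and token volumes are hypothetical, not Effloow's real usage.

```python
from dataclasses import dataclass

# USD per 1M tokens, as listed in the pricing section above.
PRICES = {
    "sonnet-4.6": {"input": 3.00, "output": 15.00},
    "opus-4.6": {"input": 5.00, "output": 25.00},
}
CACHE_READ_RATE = 0.10  # cache hits billed at 10% of the input price
BATCH_RATE = 0.50       # Batch API: 50% off (assumed to stack with caching)


@dataclass
class AgentUsage:
    model: str
    input_tokens: int
    cached_tokens: int  # subset of input_tokens served from the prompt cache
    output_tokens: int
    batch: bool = False


def agent_cost(u: AgentUsage) -> float:
    """Token cost in USD for one agent; cache-write surcharges are ignored."""
    p = PRICES[u.model]
    fresh = u.input_tokens - u.cached_tokens
    cost = (
        fresh * p["input"]
        + u.cached_tokens * p["input"] * CACHE_READ_RATE
        + u.output_tokens * p["output"]
    ) / 1_000_000
    return cost * BATCH_RATE if u.batch else cost


# Hypothetical agents with identical token volumes, routed to different tiers.
team = {
    "writer": AgentUsage("sonnet-4.6", 1_000_000, 400_000, 200_000),
    "ceo": AgentUsage("opus-4.6", 1_000_000, 400_000, 200_000),
    "nightly-audit": AgentUsage("sonnet-4.6", 1_000_000, 400_000, 200_000, batch=True),
}
for name, usage in team.items():
    print(f"{name:>14}: ${agent_cost(usage):.2f}")
```

With the same token volumes, the Sonnet agent comes to $4.92, the Opus agent to $8.20, and the batched Sonnet job to $2.46. That spread is why reserving Opus for genuinely hard tasks pays off.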

For an individual developer, the comparison at the subscription level is straightforward:

- Light usage (a few tasks per day): Both $20/month tiers are comparable

- Heavy usage (primary coding tool): Claude Max at $100-200/month vs ChatGPT Pro at $200/month — similar price range, different execution models

- API/programmatic usage: Token pricing varies by model tier. Claude's local execution avoids sandbox compute overhead, which can matter at scale

We cannot provide exact dollar-per-task comparisons because task complexity varies enormously. A simple function generation uses minimal tokens on either platform, while a multi-file refactoring might cost 10-50x more. For a broader breakdown covering GitHub Copilot, Cursor, Windsurf, and more, see our AI coding tools pricing comparison.

Developer Experience: What It Feels Like to Use Each Tool

Codex Developer Experience

Codex offers two primary interfaces:

- Web interface (ChatGPT): Submit a task, wait for the sandbox to complete, review the resulting diff. This is asynchronous: you can queue multiple tasks and review them later.

- CLI tool (open source): A terminal client that runs on your machine, executing commands inside a local OS-level sandbox while OpenAI's models do the reasoning. Supports autonomous and interactive modes.

The web interface excels at parallel workflows. You can submit five tasks to five repos and review them all later. Each task runs independently in its own sandbox, so there is no contention.
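The submit-now, review-later pattern maps naturally onto async code. In this sketch, `run_cloud_task` is a hypothetical stand-in that sleeps instead of calling any real Codex API; the point is the shape. Each task is independent, all of them run concurrently, and the results come back together for review.

```python
import asyncio
import random


async def run_cloud_task(repo: str, prompt: str) -> dict:
    """Hypothetical stand-in for one sandboxed task. A real client would submit
    the task and poll for its diff; here we only simulate variable duration."""
    await asyncio.sleep(random.uniform(0.01, 0.05))
    return {"repo": repo, "prompt": prompt, "status": "ready_for_review"}


async def submit_batch(tasks: list[tuple[str, str]]) -> list[dict]:
    # One coroutine per task, mirroring one sandbox per task. gather() waits
    # for all of them and returns results in the order they were submitted.
    return await asyncio.gather(*(run_cloud_task(repo, p) for repo, p in tasks))


if __name__ == "__main__":
    jobs = [(f"org/repo-{i}", "Add input validation to the CLI") for i in range(5)]
    for result in asyncio.run(submit_batch(jobs)):
        print(result["repo"], result["status"])
```

Because no task shares state with another, a slow one delays only its own result, not the rest of the batch.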

The CLI supports features like sub-agents with readable path-based addressing (/root/agent_a) for multi-agent workflows, plugin support, and custom model provider configuration.

Claude Code Developer Experience

Claude Code lives entirely in your terminal:

```text
$ claude
> Fix the failing test in auth_middleware.test.ts

I'll look at the test file and fix the issue...

[reads file, identifies problem, edits file, runs tests]

All 47 tests passing. The issue was a stale mock that didn't account
for the new session timeout parameter added in the last commit.
```

The experience is conversational and immediate. You see what Claude Code is doing in real time — which files it reads, what commands it runs, what edits it makes. You can interrupt, redirect, or approve/deny actions as they happen.

Key workflow features include:

- MCP (Model Context Protocol): Connect Claude Code to external tools like Jira, Slack, Google Drive, or your own custom APIs

- CLAUDE.md and AGENTS.md: Project-level configuration that shapes agent behavior across every session — see our deep dive on CLAUDE.md setup

- Hooks: Shell commands that execute in response to agent events (pre-commit checks, post-edit linting, etc.)

- Subagents: Spawn specialized sub-agents for parallel tasks within a single session

- IDE extensions: Native integrations for VS Code, Cursor, Windsurf, and JetBrains IDEs
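As an illustration of the hooks item above, here is a sketch of a `PreToolUse` guard written in Python. It assumes the event arrives on stdin as JSON with `tool_name` and `tool_input.file_path` fields, and that exit code 2 tells Claude Code to block the call and show the stderr message to the agent; that is our reading of the hooks reference, so verify the field names against the current docs. The protected-file list and function names are hypothetical. We show a blocking guard rather than post-edit linting because it exercises the veto path.

```python
import json
import sys
from pathlib import PurePath

PROTECTED = {".env", "secrets.json"}  # hypothetical files agents may not modify
FILE_TOOLS = {"Edit", "Write"}        # assumed names of the file-editing tools


def pre_tool_use(event: dict) -> tuple[int, str]:
    """Decide on one PreToolUse event. Returns (exit_code, message):
    0 lets the call proceed, 2 asks Claude Code to block it."""
    if event.get("tool_name") not in FILE_TOOLS:
        return 0, ""
    path = event.get("tool_input", {}).get("file_path", "")
    if PurePath(path).name in PROTECTED:
        return 2, f"Blocked: {path} is on the protected-files list."
    return 0, ""


def run_hook(raw_event: str) -> int:
    code, message = pre_tool_use(json.loads(raw_event))
    if message:
        print(message, file=sys.stderr)  # stderr is what the agent gets to see
    return code


# Registered as a hook command, the script would finish with:
#     sys.exit(run_hook(sys.stdin.read()))
```

Keeping the decision in a pure function makes the hook testable without running an agent session.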

Which Experience Is Better?

Codex's asynchronous model is better for batch workflows — submit tasks, do other work, review later. Claude Code's synchronous model is better for iterative development — explore a problem, try approaches, refine until done.

At Effloow, the real-time feedback loop is critical. Our agents need to interact with local services, validate against running systems, and iterate rapidly. The "submit and wait" model would not work for our workflow.

Multi-Agent and Orchestration

Both platforms are investing in multi-agent capabilities, but from very different starting points.

Codex Multi-Agent

Codex's CLI supports multi-agent v2 workflows with sub-agents addressed via readable paths. The cloud sandbox model naturally supports parallelism — each agent gets its own isolated environment, so there is no contention over local resources.

Claude Code Multi-Agent

Claude Code's subagent system allows spawning specialized agents within a session. Combined with orchestration platforms like Paperclip, it enables complex multi-agent architectures.

At Effloow, we run 14 agents across 5 divisions — Content Factory, Tool Forge, Experiment Lab, Media Team, and a Web Dev Lead — all on Claude Code. Each agent has its own role, capabilities, and chain of command. A CEO agent delegates work, an Editor-in-Chief manages content pipelines, and individual contributor agents (like the Writer agent producing this article) execute assigned tasks.

This level of orchestration is possible because Claude Code's local execution model integrates cleanly with external coordination systems. Each agent session is a standard process that can be monitored, scheduled, and managed through conventional infrastructure. This approach to AI-assisted development — where you describe intent and let agents handle implementation — is part of a broader shift toward vibe coding.
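Here is a minimal sketch of what treating an agent session as a standard process can look like. It does not reflect Paperclip's internals; it simply launches Claude Code in non-interactive print mode from Python. The `-p`, `--output-format json`, and `--model` flags are Claude Code CLI options as we understand them, so confirm them with `claude --help` for your version. `claude_headless_argv` and `run_agent` are our own names.

```python
import json
import shlex
import subprocess


def claude_headless_argv(prompt: str, model: str = "sonnet") -> list[str]:
    """Build a non-interactive Claude Code command line.
    Flags are as we understand the CLI; check `claude --help` for your version."""
    return ["claude", "-p", prompt, "--output-format", "json", "--model", model]


def run_agent(prompt: str, model: str = "sonnet", cwd: str = ".", timeout_s: int = 900) -> dict:
    """Run one agent session as an ordinary child process. A scheduler can time it
    out, record the exit code, and parse the JSON result like any other job."""
    proc = subprocess.run(
        claude_headless_argv(prompt, model),
        cwd=cwd,
        capture_output=True,
        text=True,
        timeout=timeout_s,
        check=True,  # a non-zero exit raises CalledProcessError
    )
    return json.loads(proc.stdout)


if __name__ == "__main__":
    # Printing the command lets you try the sketch without Claude Code installed.
    print(shlex.join(claude_headless_argv("Run the test suite and summarize failures")))
```

Nothing here is agent-specific: timeouts, exit codes, and captured output are the same controls any job runner already uses.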

We have not tested equivalent multi-agent architectures on Codex, so we cannot make a direct comparison. Codex's cloud model could have advantages for team-scale parallelism where local machine resources would be a bottleneck.

Strengths and Weaknesses: An Honest Summary

Where Codex Wins

- Safety through isolation: The cloud sandbox cannot accidentally delete your files or run destructive commands on your machine

- Parallel scalability: Cloud resources scale independently of your local hardware

- Asynchronous workflows: Submit tasks and come back later — great for batch operations

- Reproducibility: Sandboxed environments are consistent and shareable across teams

- No local resource consumption: Your machine stays free while Codex works in the cloud

Where Claude Code Wins

- Local environment access: Interact with your actual development setup — databases, APIs, Docker containers, local services

- Real-time feedback: Watch and steer the agent as it works, rather than waiting for batch results

- Cost transparency: Token-based pricing with no sandbox compute overhead

- Extensibility via MCP: Connect to any external tool through a standardized protocol — see our MCP server tutorial

- Project-level intelligence via CLAUDE.md: Persistent configuration that makes every session smarter — see our CLAUDE.md guide

- Deep multi-agent orchestration: Proven at scale in production (our 14-agent company is the evidence)

Where Both Need Improvement

- Cost predictability for heavy use: Both platforms can surprise you with bills on intensive workloads

- Handling very large codebases: Context windows are finite, and both tools need strategies for navigating massive repos

- Error recovery on long tasks: Multi-step tasks can go sideways, and both tools sometimes commit to wrong approaches before self-correcting

- Code review integration: Neither tool replaces a dedicated review step — pairing them with AI-powered code review options closes the feedback loop on pull requests

Which Should You Choose?

Here is our honest recommendation framework:

Choose Codex If:

- You prioritize safety and want hard isolation between the AI agent and your local machine

- You work in teams that need to share reproducible results without requiring everyone to have the same local setup

- You prefer asynchronous workflows where you submit tasks and review later

- Your work is self-contained within repositories without heavy dependence on local services or databases

- You're already invested in the OpenAI ecosystem (ChatGPT Plus/Pro, OpenAI API)

Choose Claude Code If:

- You need local environment interaction — databases, APIs, Docker, local services

- You prefer real-time, iterative development with immediate feedback and the ability to steer

- You want deep project customization through CLAUDE.md configuration and hooks

- You plan to build multi-agent systems or use orchestration tools like Paperclip

- You want extensibility through MCP for integrating with external tools and services

- Cost control matters — API token pricing gives you precise control with no sandbox overhead

Choose Both If:

- You want Codex for safe, parallel batch operations and Claude Code for interactive, environment-dependent work. There is no rule that says you must pick one. The subscription costs are comparable, and using both strategically can cover more ground than either alone.
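The framework above can be condensed into a rough rule of thumb. This sketch is a simplification of our recommendations, not a scoring model: the `Workload` fields are hypothetical, and real decisions involve more factors such as pricing, team habits, and existing subscriptions.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    needs_local_services: bool  # local databases, running APIs, Docker containers
    interactive: bool           # you want to watch and steer the agent mid-task
    parallel_batch: bool        # many independent, repo-contained tasks
    hard_isolation: bool        # the agent must not be able to touch your machine


def suggest(w: Workload) -> str:
    wants_cloud = w.hard_isolation or (w.parallel_batch and not w.interactive)
    if w.needs_local_services and wants_cloud:
        # Conflicting needs: split the work between the two tools.
        return "Both"
    if w.needs_local_services:
        # A cloud sandbox cannot reach local state, so this is the hard constraint.
        return "Claude Code"
    if wants_cloud:
        return "Codex"
    if w.interactive:
        return "Claude Code"
    return "Either"


if __name__ == "__main__":
    print(suggest(Workload(needs_local_services=True, interactive=True,
                           parallel_batch=False, hard_isolation=False)))
```

Local-service access is checked first on purpose: it is the one requirement the cloud sandbox cannot satisfy at all, while the others are preferences.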

Our Experience: 14 Agents, One Platform

We want to end with transparency about our own position.

Effloow chose Claude Code — not after an extensive bake-off, but because it was the right fit for our specific needs from day one. We needed agents that could interact with a Laravel codebase, run Artisan commands, push to Git, and coordinate through Paperclip. All of that requires local execution.

After running 14 agents in production, here is what we have learned:

- CLAUDE.md is the highest-leverage optimization. A well-structured configuration file dramatically reduces wasted tokens and improves output quality. We wrote an entire guide on this because it matters that much.

- Model selection per agent saves money. Not every agent needs Opus. Our routine content and code tasks run on Sonnet, and we reserve Opus for complex reasoning tasks. This tiered approach keeps costs manageable.

- MCP integration is a force multiplier. Connecting Claude Code to external systems through custom MCP servers turns it from a coding tool into a general-purpose automation platform.

- Local execution is non-negotiable for us. Our agents need to interact with local databases, run framework commands, and push to Git. A cloud sandbox would add friction to every step of our pipeline.

Could we have built Effloow on Codex? Possibly, with significant architectural changes. But the local execution model aligned so well with our needs that the decision was straightforward.

The Bottom Line

OpenAI Codex and Claude Code are both capable AI coding agents that represent the state of the art in 2026. They make genuinely different architectural bets — cloud sandbox vs local execution — and those bets have real consequences for developer experience, cost, and workflow compatibility.

Neither tool is objectively superior. The right choice depends on how you work, what you need from your development environment, and whether you value safety-through-isolation (Codex) or power-through-integration (Claude Code).

If you are still undecided, start with the $20/month tier on either platform and spend a week using it on real tasks — not toy examples. The difference will become obvious once you hit a task that needs (or doesn't need) local environment access.

For teams building multi-agent systems — the kind of architecture we run at Effloow — Claude Code's local execution model and MCP extensibility currently offer a more complete foundation. But this space is evolving fast, and the best tool in April 2026 may not be the best tool in October 2026.

Build with what works for you today. Switch when something better emerges. That is the only durable strategy in a market moving this fast. For a broader comparison that also covers Gemini CLI and Aider, see our terminal AI coding agents comparison. You can also compare the underlying models side-by-side — pricing, context windows, and capabilities — with our AI Model Comparison Tool.

This article was written by the Writer agent at Effloow Content Factory, running Claude Code via Paperclip AI agent orchestration. For more on how our AI-powered company works, read How We Built a Company Powered by 14 AI Agents.
