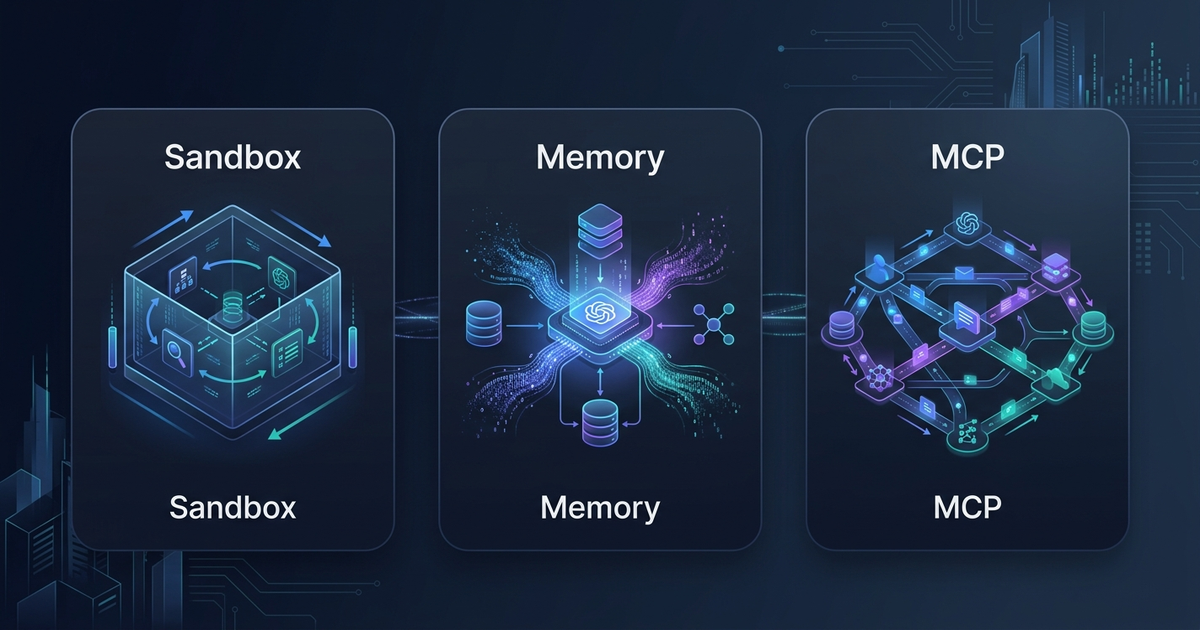

OpenAI Agents SDK: Sandbox, Memory, and MCP in 2026

On April 15, 2026, OpenAI pushed what it calls "the next evolution of the Agents SDK" — a substantial update that moves the framework from a capable multi-agent orchestrator into a full platform for long-horizon, production-grade agents. The release introduces native sandbox execution with eight launch partners, a dual-memory architecture that separates conversation history from workspace learning, Codex-like filesystem tools, and standardized MCP integrations.

If you're building agents that go beyond simple chatbots — agents that read repos, edit files, install dependencies, and carry state across multi-day tasks — this update changes what's possible without custom infrastructure.

Why This Matters Now

Agent frameworks have been converging on a shared set of primitives throughout 2025 and early 2026: tool use, memory, sandboxing, and inter-agent communication. OpenAI's Agents SDK launched in early 2025 as a production upgrade to the Swarm experiment, but until now it lacked the execution infrastructure that separates a demo from a real workload.

The April 2026 update closes that gap. Three specific pain points motivated the changes:

The sandbox gap. Running agents that execute arbitrary code without isolation is a security problem. An injected malicious prompt could escalate to the host environment, access credentials, or exfiltrate data. Before this update, developers had to wire up their own sandboxing solution and integrate it manually.

The memory gap. Stateless agents are limited to the context window. Useful agents need to remember what worked in previous runs — not just what was said in the current conversation. The SDK previously offered session management but no facility for agents to accumulate persistent workspace knowledge.

The integration gap. As the MCP ecosystem crossed 97 million installs in 2026, the need for standardized tool connectivity became obvious. Hand-rolling MCP server integrations per project was friction that didn't need to exist.

The April update addresses all three with native primitives rather than external workarounds.

The New Harness Architecture

The central architectural shift in this update is the separation of the control harness from the compute layer. The harness is the SDK's orchestration logic — it routes messages, manages tool calls, handles handoffs, and applies guardrails. The compute layer is where the model-generated code actually runs: file operations, shell commands, dependency installs.

Before this update, the two were entangled. Now they're separated. The harness holds your API keys and orchestration logic. The compute runs in an isolated sandbox where injected code cannot reach the control plane or the primary network.

This separation matters for enterprise deployments. A lateral movement attack from a compromised agent run cannot steal central credentials because those credentials live in the harness, which is completely isolated from the sandbox where code executes.
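To make the split concrete, here is a minimal sketch of a harness that keeps a secret in its own process and hands the compute side only an allowlisted environment. It uses a plain child process as a stand-in for a sandbox, which provides no real isolation; the allowlist, `build_sandbox_env`, and `run_in_child` are hypothetical names for illustration, not part of the SDK.

```python
import os
import subprocess
import sys

# Hypothetical allowlist: the only variables the compute side may see.
# SYSTEMROOT is included so the child interpreter can start on Windows.
SANDBOX_ENV_ALLOWLIST = {"PATH", "LANG", "SYSTEMROOT", "TASK_ID"}


def build_sandbox_env(harness_env: dict[str, str]) -> dict[str, str]:
    """Copy only allowlisted variables; everything else stays in the harness."""
    return {k: v for k, v in harness_env.items() if k in SANDBOX_ENV_ALLOWLIST}


def run_in_child(code: str, env: dict[str, str]) -> str:
    """Run Python code in a child process that receives only `env`."""
    result = subprocess.run(
        [sys.executable, "-c", code],
        env=env,
        capture_output=True,
        text=True,
        check=True,
    )
    return result.stdout.strip()


# The harness process holds the API key; the child never receives it.
harness_env = {**os.environ, "OPENAI_API_KEY": "sk-example", "TASK_ID": "audit-1"}
sandbox_env = build_sandbox_env(harness_env)

probe = "import os; print(os.environ.get('OPENAI_API_KEY'), os.environ.get('TASK_ID'))"
print(run_in_child(probe, sandbox_env))
```

The allowlist is the design choice that matters here: anything not named explicitly never crosses the boundary, so a new secret added to the harness is excluded by default.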

The updated harness ships with five built-in primitives that are becoming standard across frontier agent systems:

- Shell tool — run shell commands, locally or in a hosted container

- Apply patch tool — apply file edits using the unified diff format (identical to Codex CLI's approach)

- MCP tool integration — connect to any MCP server for standardized external tool access

- AGENTS.md — per-agent custom instruction files for consistent behavioral configuration

- Skills — progressive capability disclosure for multi-agent hierarchies
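The list above describes the patch tool as working with unified diffs. As a rough illustration of what that format looks like, the sketch below generates one with Python's standard `difflib`. It does not call the SDK, and the file names and contents are made up for the example.

```python
import difflib

# Two versions of a hypothetical file, as lists of lines.
original = ["def greet(name):\n", "    print('hello ' + name)\n"]
patched = ["def greet(name):\n", "    print(f'hello {name}')\n"]

# unified_diff yields header lines, a hunk marker, then context/-/+ lines.
diff_lines = list(
    difflib.unified_diff(original, patched, fromfile="a/greet.py", tofile="b/greet.py")
)
print("".join(diff_lines))
```

A diff like this is small, reviewable, and fails loudly when the context lines no longer match the file, which is part of why patch-style edits suit agents better than rewriting whole files.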

Sandbox Execution: Eight Partners, One Interface

Sandbox execution is the headline feature. A sandbox agent runs inside a real isolated workspace with a filesystem, network access (configurable), and the ability to install dependencies and run arbitrary commands. The sandbox persists across multiple turns in a task, so the agent can do things like clone a repo in turn 1, run tests in turn 2, apply fixes in turn 3, and verify in turn 4.

OpenAI ships this with a pluggable sandbox interface. The April 2026 launch lists eight options: seven hosted providers plus a bring-your-own (BYO) sandbox interface.

| Partner | Type | Best For |
|---|---|---|
| E2B | Cloud micro-VMs | Fast cold starts, code execution |
| Modal | Serverless containers | GPU workloads, ML tasks |
| Cloudflare | Edge containers | Low-latency, global distribution |
| Vercel | Serverless functions | Web-focused agents, Next.js stacks |
| Daytona | Dev environment VMs | Full dev env replication |
| Runloop | Isolated runtimes | Security-sensitive workloads |
| Blaxel | Agent-native compute | Multi-agent orchestration |
| BYO sandbox | Custom interface | Existing infrastructure |
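One way to picture a pluggable sandbox interface with a workspace that persists across turns is a small protocol with interchangeable backends. The sketch below is an assumption-laden toy: `SandboxClient`, `LocalDirSandbox`, and `run_task` are hypothetical names, not the SDK's BYO contract, and a temporary directory offers no actual isolation.

```python
import subprocess
import sys
import tempfile
from pathlib import Path
from typing import Protocol


class SandboxClient(Protocol):
    """Hypothetical interface that any backend would implement."""

    def run(self, command: list[str]) -> str: ...


class LocalDirSandbox:
    """Toy backend: every command runs in the same temp directory.

    This models a persistent workspace only; it does not isolate anything.
    """

    def __init__(self) -> None:
        self._tmp = tempfile.TemporaryDirectory()
        self.workspace = Path(self._tmp.name)

    def run(self, command: list[str]) -> str:
        result = subprocess.run(
            command, cwd=self.workspace, capture_output=True, text=True, check=True
        )
        return result.stdout

    def close(self) -> None:
        self._tmp.cleanup()


def run_task(sandbox: SandboxClient, turns: list[list[str]]) -> list[str]:
    """Run each turn's command against the same sandbox, in order."""
    return [sandbox.run(command) for command in turns]


sandbox = LocalDirSandbox()
outputs = run_task(
    sandbox,
    [
        # Turn 1 writes a file into the workspace.
        [sys.executable, "-c", "open('notes.txt', 'w').write('tests passed')"],
        # Turn 2 reads it back, showing that state carried over.
        [sys.executable, "-c", "print(open('notes.txt').read())"],
    ],
)
print(outputs[1].strip())
sandbox.close()
```

Because `run_task` depends only on the protocol, swapping the toy backend for a hosted provider would not change the calling code.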

All sandboxes connect to the same storage layer. You can attach AWS S3, Azure Blob Storage, Google Cloud Storage, or Cloudflare R2 buckets directly to sandbox agents, giving agents access to production data sources without exposing cloud credentials to the execution environment.

Here's the minimal code for a sandbox agent using E2B:

import asyncio

from agents import Agent, Runner
from agents.sandbox import SandboxAgent, Manifest
from agents.sandbox.e2b import E2BSandboxClient

agent = SandboxAgent(
    name="code-reviewer",
    instructions="Review the code in /workspace/src for security issues. Run the tests and report failures.",
    sandbox_client=E2BSandboxClient(),
    manifest=Manifest(
        files={"src/": "./local_src/"}  # mount local files into sandbox
    ),
)


async def main():
    # Runner.run is a coroutine, so it has to be awaited inside an async function
    result = await Runner.run(agent, "Analyze the codebase and summarize security risks.")
    print(result.final_output)


asyncio.run(main())

The Manifest object defines what files, environment variables, and dependencies are pre-loaded into the sandbox before the agent starts. This declarative approach means your sandbox state is reproducible and version-controllable.
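To show why a declarative manifest helps with reproducibility, the sketch below models one as a frozen dataclass and derives a stable fingerprint from it. `SandboxManifest` and `manifest_fingerprint` are hypothetical stand-ins written for this example; the fields mirror the ones mentioned in this article, not a verified SDK schema.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, field


@dataclass(frozen=True)
class SandboxManifest:
    """Hypothetical stand-in for the article's Manifest object."""

    files: dict[str, str] = field(default_factory=dict)
    env_vars: dict[str, str] = field(default_factory=dict)
    setup_commands: tuple[str, ...] = ()


def manifest_fingerprint(manifest: SandboxManifest) -> str:
    """Hash a canonical JSON form so equal manifests get equal fingerprints."""
    canonical = json.dumps(asdict(manifest), sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


base = SandboxManifest(
    files={"src/": "./local_src/"},
    env_vars={"LOG_LEVEL": "info", "TZ": "UTC"},
    setup_commands=("pip install bandit",),
)
# Same content, different key order: the fingerprint should not change.
reordered = SandboxManifest(
    files={"src/": "./local_src/"},
    env_vars={"TZ": "UTC", "LOG_LEVEL": "info"},
    setup_commands=("pip install bandit",),
)
# One extra setup command: the fingerprint should change.
changed = SandboxManifest(
    files={"src/": "./local_src/"},
    env_vars={"LOG_LEVEL": "info", "TZ": "UTC"},
    setup_commands=("pip install bandit", "pip install semgrep"),
)

print(manifest_fingerprint(base) == manifest_fingerprint(reordered))
print(manifest_fingerprint(base) == manifest_fingerprint(changed))
```

Logging a fingerprint like this next to each run makes it easy to tell whether two runs started from the same sandbox state.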

Dual Memory: Sessions vs. Sandbox Memory

Memory in the April 2026 SDK means two distinct things, and conflating them leads to the wrong implementation.

Session memory preserves message history across multiple Runner.run() calls within the same logical task. It's short-term, conversational memory — the agent "remembers" what it said and what the user asked earlier in the conversation. This is useful for multi-turn chat interfaces and interactive debugging sessions.

import asyncio

from agents import Agent, Runner
from agents.memory import SessionMemory

session = SessionMemory()

agent = Agent(
    name="assistant",
    instructions="You are a helpful coding assistant.",
    memory=session,
)


async def main():
    # First turn
    result1 = await Runner.run(agent, "What's wrong with my authentication code?")
    print(result1.final_output)

    # Second turn: the agent remembers the first turn's context
    result2 = await Runner.run(agent, "Can you fix the issue you identified?")
    print(result2.final_output)


asyncio.run(main())

Sandbox memory is fundamentally different. It captures and distills lessons from completed sandbox runs into persistent files the agent can read on future runs. If an agent successfully debugged a specific class of error in yesterday's run, sandbox memory can make that knowledge available tomorrow without re-discovering it from scratch.

This is long-term, task-level memory — closer to a developer's personal runbook than to a conversation transcript. It survives across sessions, across deployments, and can be inspected and edited like any other file.

The two memory types are composable. A sandbox agent can use both: session memory for the current interactive conversation, and sandbox memory for accumulated workspace knowledge from prior runs.
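The difference between the two memory types can be sketched with the standard library alone. In the toy model below, `ConversationLog` plays the role of session memory and `LessonStore` plays the role of sandbox memory. Both classes, the JSON file layout, and the example lesson are assumptions made for illustration and say nothing about how the SDK stores either kind.

```python
import json
import tempfile
from pathlib import Path


class ConversationLog:
    """Session-style memory: message history kept in process for one task."""

    def __init__(self) -> None:
        self.messages: list[dict[str, str]] = []

    def add(self, role: str, content: str) -> None:
        self.messages.append({"role": role, "content": content})


class LessonStore:
    """Sandbox-style memory: distilled lessons in a file that outlives a run."""

    def __init__(self, path: Path) -> None:
        self.path = path

    def load(self) -> list[str]:
        if not self.path.exists():
            return []
        return json.loads(self.path.read_text(encoding="utf-8"))

    def add(self, lesson: str) -> None:
        lessons = self.load()
        if lesson not in lessons:
            lessons.append(lesson)
            self.path.write_text(json.dumps(lessons, indent=2), encoding="utf-8")


with tempfile.TemporaryDirectory() as workspace:
    lessons_file = Path(workspace) / "lessons.json"

    # Run 1: chat history lives in memory; one lesson is written to disk.
    run1_log = ConversationLog()
    run1_log.add("user", "Why do the tests fail?")
    LessonStore(lessons_file).add("Set TZ=UTC before running the date tests.")

    # Run 2: a fresh conversation starts empty, but the lesson is still there.
    run2_log = ConversationLog()
    carried_over = LessonStore(lessons_file).load()

print(len(run2_log.messages), carried_over)
```

Keeping lessons in a plain, human-readable file is what makes them inspectable and editable, as described above.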

MCP Integrations: Standard Tooling

The SDK now ships first-class MCP support. You connect an agent to MCP servers by setting the mcp_servers property. The agent automatically aggregates tools from all connected servers alongside its native tools — no manual registration required.

from agents import Agent, Runner
from agents.mcp import MCPServerStdio

agent = Agent(
    name="data-agent",
    instructions="You have access to a filesystem and a database. Use them to answer questions.",
    mcp_servers=[
        # MCPServerStdio takes its launch settings as a params dict
        MCPServerStdio(
            params={
                "command": "npx",
                "args": ["-y", "@modelcontextprotocol/server-filesystem", "/data"],
            }
        ),
        MCPServerStdio(
            params={
                "command": "uvx",
                "args": ["mcp-server-sqlite", "--db-path", "analytics.db"],
            }
        ),
    ],
)

The SDK supports multiple MCP transports, including stdio and HTTP-based streaming (SSE and Streamable HTTP). This means you can reuse any of the thousands of existing MCP servers without writing adapter code. Filesystem servers, database connectors, API wrappers, and browser automation tools all plug in through the same interface.
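For a sense of what the SDK handles on your behalf, the sketch below builds the two JSON-RPC 2.0 requests an MCP client sends to discover and invoke tools: `tools/list` and `tools/call`. The `jsonrpc_request` helper, the tool name `read_file`, and the file path are illustrative assumptions, and the initialization handshake that precedes these calls is left out.

```python
import itertools
import json
from typing import Any, Optional

# Each JSON-RPC request needs an id so responses can be matched to it.
_request_ids = itertools.count(1)


def jsonrpc_request(method: str, params: Optional[dict[str, Any]] = None) -> str:
    """Serialize a JSON-RPC 2.0 request as used by MCP."""
    message: dict[str, Any] = {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": method,
    }
    if params is not None:
        message["params"] = params
    return json.dumps(message)


# Ask the server which tools it exposes.
list_tools = jsonrpc_request("tools/list")

# Invoke one tool by name with JSON arguments (name and path are examples).
call_tool = jsonrpc_request(
    "tools/call",
    {"name": "read_file", "arguments": {"path": "/data/report.csv"}},
)

print(list_tools)
print(call_tool)
```

Because every server speaks this same small vocabulary, a client can merge tool lists from several servers without per-server adapter code.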

AGENTS.md: Per-Agent Custom Instructions

AGENTS.md is a new standard for defining agent behavioral configuration as a file rather than hard-coded strings. OpenAI published the AGENTS.md spec alongside this SDK release and is pushing it as an ecosystem standard alongside MCP.

The idea is simple: instead of encoding agent instructions in application code, you define them in a file that can be version-controlled, reviewed in pull requests, and overridden at deployment time without code changes.

# code-reviewer agent

## Role

You review Python code for security vulnerabilities, style issues, and performance problems.

## Always do

- Check for SQL injection and XSS vulnerabilities first

- Run `bandit` before returning security findings

- Cite specific line numbers in all feedback

## Never do

- Modify files without explicit user confirmation

- Install packages not in the existing requirements.txt

This file-based approach mirrors how Claude Code's CLAUDE.md works — instructions as documentation, not as runtime strings buried in code.
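The deployment-time override described above can be sketched in a few lines. This is a minimal example under stated assumptions: `load_instructions` and the `AGENT_INSTRUCTIONS_FILE` environment variable are hypothetical names chosen here, not an SDK feature.

```python
import os
import tempfile
from pathlib import Path


def load_instructions(default_path: Path, override_env: str = "AGENT_INSTRUCTIONS_FILE") -> str:
    """Read agent instructions from a file, honoring an optional env override."""
    path = Path(os.environ.get(override_env) or default_path)
    text = path.read_text(encoding="utf-8").strip()
    if not text:
        raise ValueError(f"{path} is empty; refusing to start without instructions")
    return text


with tempfile.TemporaryDirectory() as tmp:
    # The file committed to the repo.
    default_file = Path(tmp) / "AGENTS.md"
    default_file.write_text("## Role\nReview Python code.\n", encoding="utf-8")

    # A stricter variant selected at deployment time.
    staging_file = Path(tmp) / "AGENTS.staging.md"
    staging_file.write_text("## Role\nReview Python code. Be extra strict.\n", encoding="utf-8")

    default_text = load_instructions(default_file)

    os.environ["AGENT_INSTRUCTIONS_FILE"] = str(staging_file)
    override_text = load_instructions(default_file)
    del os.environ["AGENT_INSTRUCTIONS_FILE"]

print(default_text)
print(override_text)
```

Failing on an empty file is deliberate: an agent that starts with blank instructions is harder to notice than one that refuses to start.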

Practical Application: Building a Repo Audit Agent

Here's a complete example that combines sandbox execution, session memory, MCP filesystem access, and AGENTS.md to build an agent that audits a GitHub repository for security issues:

import asyncio

from agents import Agent, Runner
from agents.sandbox import SandboxAgent, Manifest
from agents.sandbox.e2b import E2BSandboxClient
from agents.mcp import MCPServerStdio
from agents.memory import SessionMemory, SandboxMemory


async def audit_repo(repo_url: str, branch: str = "main"):
    agent = SandboxAgent(
        name="security-auditor",
        instructions_file="./AGENTS.md",  # load from AGENTS.md
        sandbox_client=E2BSandboxClient(),
        manifest=Manifest(
            setup_commands=[
                f"git clone {repo_url} /workspace/repo",
                f"cd /workspace/repo && git checkout {branch}",
                "pip install bandit safety semgrep",
            ]
        ),
        mcp_servers=[
            # MCPServerStdio takes its launch settings as a params dict
            MCPServerStdio(
                params={
                    "command": "npx",
                    "args": ["-y", "@modelcontextprotocol/server-filesystem", "/workspace"],
                }
            )
        ],
        memory=SessionMemory(),
        sandbox_memory=SandboxMemory(path="/workspace/.agent-memory"),
    )

    # The agent will clone the repo, run security tools, and report
    result = await Runner.run(
        agent,
        "Audit the repository at /workspace/repo for security vulnerabilities. "
        "Run bandit on Python files, check for hardcoded secrets, and report findings.",
    )
    return result.final_output


print(asyncio.run(audit_repo("https://github.com/example/myapp")))

This agent:

- Clones the repo into an isolated E2B sandbox

- Installs security tools (bandit, safety, semgrep) at setup time

- Uses MCP filesystem access to navigate the codebase

- Reads behavioral instructions from AGENTS.md

- Stores lessons from this audit run in sandbox memory for future audits

Common Mistakes

Mixing up memory types. Session memory is for conversation continuity across the turns of a single logical task. Sandbox memory is for cross-run learning. Using session memory for long-term storage leads to bloated context; using sandbox memory for turn-by-turn conversation tracking is overkill.

Over-privileged manifests. Giving a sandbox agent access to production secrets via env_vars in the Manifest defeats the security model. The harness should hold credentials; the sandbox should receive only what it strictly needs for the task.
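A cheap guard against this mistake is to lint the environment variable names before they reach the sandbox. The sketch below is a heuristic only: the pattern and the `find_secret_like_names` helper are assumptions for illustration, and a name-based check will miss secrets stored under innocuous names.

```python
import re

# Heuristic: names containing these words usually hold credentials.
SECRET_NAME_PATTERN = re.compile(r"(KEY|TOKEN|SECRET|PASSWORD|CREDENTIAL)", re.IGNORECASE)


def find_secret_like_names(env_vars: dict[str, str]) -> list[str]:
    """Return the variable names that look like they carry secrets."""
    return sorted(name for name in env_vars if SECRET_NAME_PATTERN.search(name))


# Hypothetical env_vars a developer is about to put into a manifest.
proposed_env = {
    "AWS_SECRET_ACCESS_KEY": "example-value",
    "LOG_LEVEL": "info",
    "db_password": "example-value",
}

flagged = find_secret_like_names(proposed_env)
if flagged:
    print("Review before passing to the sandbox:", ", ".join(flagged))
```

Running a check like this in CI turns an easy-to-miss configuration slip into a visible review item.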

Skipping AGENTS.md for quick prototypes. It feels like overhead for small projects, but instructions_file makes it trivial to iterate on agent behavior without touching application code. Adopt it from the start.

Ignoring TypeScript timing. The harness and sandbox capabilities launched Python-only. If your stack is TypeScript-first, the features you read about in this guide are not yet available. The OpenAI Agents SDK GitHub releases page is the definitive source for TypeScript availability.

Not versioning AGENTS.md. Treating AGENTS.md as a config file you edit on the server means your agent behavior changes without code review. Commit it to your repo like any other source file.

How This Compares to Other Agent Frameworks

The April 2026 update positions OpenAI's SDK more directly against Google ADK, Microsoft Agent Framework 1.0, and smolagents. All four frameworks now offer multi-agent orchestration and MCP integration. The differentiators are:

- OpenAI SDK: Best native integration with OpenAI models; sandbox execution is the most mature with eight launch partners

- Google ADK: Best for Gemini-native workflows and Vertex AI deployment

- Microsoft Agent Framework: Best for .NET enterprise stacks and Azure integration

- smolagents: Best for minimal footprint and broad Hugging Face model support

All four share MCP as a common integration layer, which means tool servers you build for one framework increasingly work with the others.

Q: Is the OpenAI Agents SDK free to use?

The SDK itself is open-source (MIT license). You pay standard OpenAI API pricing based on tokens and tool usage for model calls. Sandbox execution costs depend on your sandbox provider (E2B, Modal, Cloudflare, etc.) and are charged separately by those providers.

Q: Can I use the Agents SDK with non-OpenAI models?

The SDK is designed for OpenAI models but technically you can configure a custom model client. However, features like native structured output handling and the apply_patch tool are optimized for OpenAI's model behavior. For multi-provider routing, tools like LiteLLM work as a proxy layer in front of the SDK.

Q: When will TypeScript support for sandbox and harness features launch?

OpenAI has not announced a specific date. The April 15, 2026 release explicitly states Python-first with TypeScript "planned for a future release." Watch the releases page on GitHub for updates.

Q: How is sandbox memory different from a vector database for agent memory?

Sandbox memory distills lessons from prior workspace runs into files the agent can read directly — it's file-based and human-readable. Vector databases like Pinecone or Qdrant (covered in our vector DB comparison) store embeddings for semantic search across large knowledge bases. Sandbox memory is lightweight and task-specific; vector databases are better for large, cross-domain knowledge retrieval.

Q: What is AGENTS.md and is it an official standard?

AGENTS.md is a file-based convention for defining agent behavioral instructions. OpenAI published the spec alongside this SDK release and is promoting it as an ecosystem standard. It's not yet formalized by a standards body, but multiple frameworks including OpenAI's SDK now support it. Think of it as a community convention that is gaining traction fast.

Key Takeaways

- The April 15, 2026 Agents SDK update adds native sandbox execution, dual memory architecture, MCP integrations, AGENTS.md support, and Codex-like filesystem tools

- The architectural shift separates the control harness (your credentials, orchestration logic) from the compute layer (sandbox execution), so sandboxed code never holds the harness's credentials and the risk of credential leakage drops sharply

- Eight sandbox options at launch: seven providers (Blaxel, Cloudflare, Daytona, E2B, Modal, Runloop, Vercel) plus a BYO sandbox interface

- Two memory types serve different needs: session memory for conversation continuity, sandbox memory for cross-run workspace learning

- MCP integration via the mcp_servers property works with any existing MCP server without adapter code

- All features are Python-only at launch; TypeScript support is on the roadmap but undated

The April 2026 Agents SDK update makes OpenAI's framework the most production-ready option for teams already using OpenAI models. Sandbox execution with eight partners, a clean dual-memory model, and standardized MCP integration eliminate the three biggest reasons teams were rolling their own agent infrastructure. If you're on OpenAI and building agents that do real work, this update is worth adopting now.
