Ollama + Open WebUI Self-Hosting Guide 2026 — Run Your Own AI for $0

ChatGPT Pro costs $200 a month. Claude Pro costs $20. Even the budget API tiers add up once you start building real workflows.

There is another option: run your own AI locally or on a cheap VPS, with a ChatGPT-style interface, for $0 to $5 a month. No API keys. No usage limits. No data leaving your machine.

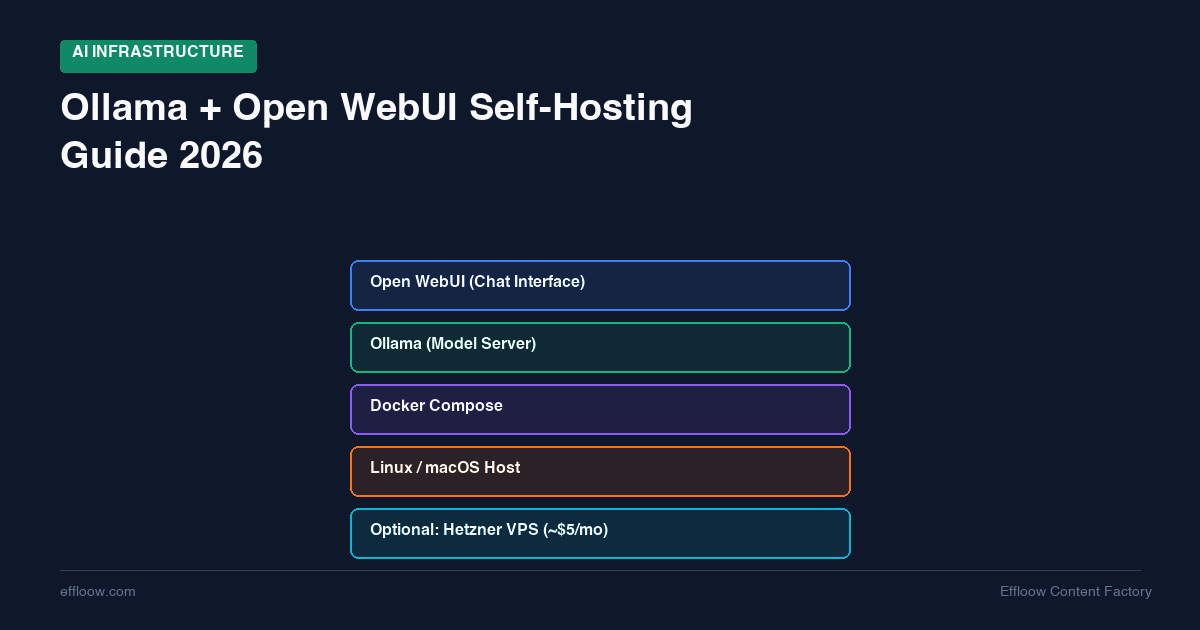

The stack is Ollama for running models and Open WebUI for the browser interface. Ollama has hit 52 million monthly downloads as of Q1 2026 — this is not experimental software anymore. Open WebUI gives you a polished chat interface with conversation history, model switching, document upload, and multi-user support.

This guide covers two paths: a 5-minute local setup for your Mac or Linux machine, and a VPS deployment on Hetzner for when you want 24/7 availability without running your laptop all day. We will be honest about what works well, what does not, and when you should just use an API instead.

Why Self-Host Your Own AI in 2026

Before diving into setup, it helps to understand why self-hosting has become practical this year — and when it actually makes sense.

The Cost Argument

API pricing in early 2026 looks like this:

| Service | Monthly cost at 10M tokens/day |
|---|---|
| GPT-5 | ~$168/month |
| Claude Sonnet 4.5 | ~$270/month |
| Gemini 2.5 Pro | ~$168/month |
| DeepSeek V3.2 | ~$21/month |

Self-hosting on a consumer GPU like an RTX 4090 runs roughly $30-80/month in electricity after the initial hardware purchase. On a local Mac with Apple Silicon, power consumption is under $15/month. On a budget VPS, you are looking at $5-8/month for CPU-only inference.

The catch: self-hosted models are generally smaller and less capable than frontier API models. You are not replacing GPT-5 with a 7B model running on a $4 VPS. You are replacing it for specific tasks where a smaller model is good enough — drafting, summarization, code completion, local RAG, and casual conversation.
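The electricity estimates in this section come down to simple arithmetic, which is easy to rerun with your own numbers. The sketch below assumes an average power draw, daily runtime, and electricity price; all three inputs are illustrative assumptions, not measurements from this guide.

```python
def monthly_electricity_cost(avg_watts: float, hours_per_day: float,
                             price_per_kwh: float, days: int = 30) -> float:
    """Return the electricity cost for one month, in the currency of price_per_kwh."""
    kwh = avg_watts / 1000 * hours_per_day * days  # watts -> kilowatt-hours
    return kwh * price_per_kwh


if __name__ == "__main__":
    # Assumed figures: 450 W average draw, running 24 h/day, $0.15 per kWh.
    print(round(monthly_electricity_cost(450, 24, 0.15), 2))
```

With those assumed inputs the result lands inside the $30-80 range quoted above. A machine that idles most of the day will cost far less, so plug in your own average draw.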

The Privacy Argument

Every prompt you send to an API leaves your network. For some workflows — medical notes, client data, proprietary code, legal documents — that is a non-starter regardless of the provider's privacy policy.

Self-hosted inference keeps everything local. Your prompts never leave your machine or your VPS. This is not a theoretical benefit: it is a compliance requirement for many teams.

The Learning Argument

Understanding how LLM inference actually works — model loading, quantization, context windows, memory management — makes you a better AI engineer. Self-hosting forces that understanding in a way that API calls never will.

We covered a similar philosophy in our guide to self-hosting your entire dev stack for under $20/month. Ollama fits perfectly into that same infrastructure-as-education mindset.

What Is Ollama + Open WebUI

Ollama: The Model Runtime

Ollama is an open-source tool that makes running LLMs locally as simple as ollama run llama3. It handles model downloading, quantization, GPU/CPU allocation, and exposes an OpenAI-compatible API at localhost:11434.

Key facts about Ollama in 2026:

- 52 million monthly downloads (Q1 2026) — 520x growth from Q1 2023

- 135,000+ GGUF models available on HuggingFace

- Runs on macOS (Apple Silicon), Linux, and Windows

- Exposes an OpenAI-compatible API, so existing code that talks to OpenAI can point at Ollama instead

- Default limit of ~4 parallel requests — designed for personal/small team use, not production multi-user deployments
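To make the OpenAI-compatible point concrete, here is a minimal sketch that talks to a local Ollama server using only the Python standard library. It assumes Ollama is listening on the default localhost:11434, that the OpenAI-style route is /v1/chat/completions, and that a model tagged llama3.2:3b has already been pulled; swap in whatever model you actually have.

```python
import json
import urllib.request

OLLAMA_BASE_URL = "http://localhost:11434"  # default address mentioned in this guide


def build_chat_request(model: str, prompt: str,
                       base_url: str = OLLAMA_BASE_URL) -> urllib.request.Request:
    """Build an OpenAI-style chat completion request aimed at Ollama."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url}/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def chat(model: str, prompt: str) -> str:
    """Send the request and return the assistant's reply text."""
    request = build_chat_request(model, prompt)
    with urllib.request.urlopen(request, timeout=120) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]


# Example call (needs a running Ollama server and the model pulled):
# print(chat("llama3.2:3b", "Say hello in five words."))
```

Code already written against the OpenAI API usually needs only its base URL changed to point at the same address.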

Open WebUI: The Chat Interface

Open WebUI is a self-hosted web interface that connects to Ollama (or any OpenAI-compatible API) and gives you a ChatGPT-like experience in your browser.

What you get:

- Chat interface with conversation history and search

- Model switching (swap between models mid-conversation)

- Document upload and RAG (retrieval-augmented generation)

- Multi-user support with role-based access

- Prompt templates and system message customization

- Mobile-friendly responsive design

- Local file and image analysis

Together, Ollama + Open WebUI give you a private, self-hosted ChatGPT alternative that you fully control.

Path A: Local Setup on Mac/Linux (5-Minute Quickstart)

This is the fastest way to get running. Ollama itself needs no Docker, no server configuration, and no monthly bill; only the Open WebUI step below uses Docker.

Step 1: Install Ollama

macOS:

Download from ollama.com or use Homebrew:

brew install ollama

Linux:

curl -fsSL https://ollama.com/install.sh | sh

Step 2: Pull and Run a Model

# Pull a model (one-time download)
# Note: the 8B Llama model is published under the llama3.1 tag; llama3.2 ships in 1B and 3B sizes
ollama pull llama3.1:8b

# Run it interactively
ollama run llama3.1:8b

That is it. You now have a local LLM running in your terminal. Type a prompt, get a response.

Step 3: Start the Ollama Server

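For Open WebUI to connect, Ollama needs to be running as a background server. The macOS app and the Linux install script normally start it for you. If you installed with Homebrew, or the server is not running, start it manually: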

ollama serve

This starts the API on http://localhost:11434. You can verify it works:

curl http://localhost:11434/api/tags
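The /api/tags response is JSON, so a script can check which models are installed before Open WebUI tries to use them. The sketch below parses a response body; the sample payload is trimmed and illustrative, and the helper name installed_models is our own.

```python
import json


def installed_models(tags_json: str) -> list[str]:
    """Extract model names from the JSON body returned by /api/tags."""
    data = json.loads(tags_json)
    return [model["name"] for model in data.get("models", [])]


# Trimmed, illustrative response body; a real response carries more fields per model.
sample = '{"models": [{"name": "llama3.2:3b", "size": 2019393189}]}'
print(installed_models(sample))
```

An empty list means the server is up but no model has been pulled yet.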

Step 4: Install Open WebUI

The simplest method is Docker:

docker run -d \
  -p 3000:8080 \
  --add-host=host.docker.internal:host-gateway \
  -v open-webui:/app/backend/data \
  --name open-webui \
  --restart always \
  ghcr.io/open-webui/open-webui:main

Open http://localhost:3000 in your browser. Create an account (the first account becomes admin), and you will see your Ollama models ready to chat with.

Hardware Requirements for Local

How much can your machine handle? Here is a practical guide:

| Model Size | RAM/VRAM Needed (4-bit quantized) | Example Hardware | Speed |
|---|---|---|---|
| 3B (e.g., Llama 3.2 3B) | 2-3 GB | Any modern laptop | 40-60 tok/s |
| 7-8B (e.g., Llama 3.1 8B) | 4-6 GB | M1 Mac, RTX 3060 | 30-50 tok/s |
| 13-14B (e.g., Phi-4 14B) | 8-10 GB | M2 Pro, RTX 4060 Ti | 20-35 tok/s |
| 32B (e.g., Qwen 2.5 32B) | 16-20 GB | M3 Pro, RTX 4090 | 12-20 tok/s |
| 70B (e.g., Llama 3.3 70B) | 35-40 GB | M4 Max 128GB, dual 4090 | 7-12 tok/s |

Rule of thumb: roughly 0.5 GB of VRAM per billion parameters with 4-bit quantization, plus some headroom for the context cache. 16-bit weights (FP16) need about four times as much, roughly 2 GB per billion parameters.
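The rule of thumb follows from bytes per weight: 4 bits is half a byte, 16 bits is two bytes. A minimal sketch of that arithmetic is below. It counts weights only and treats 1 GB as one billion bytes; the context cache and runtime overhead come on top, which is why the table above lists slightly higher figures.

```python
def weights_memory_gb(params_billion: float, bits_per_weight: int) -> float:
    """Approximate memory for model weights alone, in GB (1 GB = 1e9 bytes)."""
    bytes_per_weight = bits_per_weight / 8
    return params_billion * bytes_per_weight


if __name__ == "__main__":
    # An 8B model at common precisions.
    for bits in (4, 8, 16):
        print(f"{bits}-bit: {weights_memory_gb(8, bits)} GB")
```

For an 8B model this gives 4 GB at 4-bit and 16 GB at 16-bit, before overhead.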

Apple Silicon Macs are particularly good for local LLM work because they share unified memory between CPU and GPU. An M2 Pro with 32 GB can comfortably run 32B models. An M4 Max with 128 GB can handle 70B models at roughly 7-12 tokens/second.

Path B: VPS Deployment on Hetzner (~$5/month)

Not everyone wants to keep a laptop running 24/7. A VPS gives you always-on access from any device — your phone, a tablet, any browser.

The trade-off: VPS servers at this price point have no GPU, so inference is CPU-only. This means smaller models and slower generation. But for many use cases — quick questions, writing assistance, code review, document summarization — a 3B or 7B model on CPU is perfectly usable.

Why Hetzner

We use Hetzner for most of our self-hosted infrastructure at Effloow, as we described in our self-hosting dev stack guide. The reasons are the same here:

- CX23: 2 vCPU, 4 GB RAM, 40 GB SSD — €3.99/month (~$4.99/month)

- CX33: 4 vCPU, 8 GB RAM, 80 GB SSD — €6.49/month (~$8.09/month)

- EU data centers (Falkenstein, Helsinki) for GDPR compliance

- Flat monthly pricing with no bandwidth surprises

For the full Hetzner server lineup including GPU options, see our Hetzner Cloud for AI Projects guide.

The CX23 can run a 3B model with CPU inference. The CX33 handles 7-8B models. For larger models, you would need a dedicated server with more RAM, which pushes the cost above $20/month.

If you want to run these containers alongside other services (Gitea, Coolify, monitoring), check our comparison of Coolify vs Dokploy for managing deployments on a single server.

VPS Setup with Docker Compose

SSH into your Hetzner server and create a project directory:

mkdir -p ~/ollama-stack && cd ~/ollama-stack

Create docker-compose.yml:

version: "3.8"

services:
  ollama:
    image: ollama/ollama:latest
    container_name: ollama
    restart: unless-stopped
    ports:
      # Bind to localhost only: the Ollama API has no authentication,
      # and Open WebUI reaches it over the internal Compose network anyway
      - "127.0.0.1:11434:11434"
    volumes:
      - ollama_data:/root/.ollama
    environment:
      - OLLAMA_NUM_PARALLEL=2
      - OLLAMA_MAX_LOADED_MODELS=1
      - OLLAMA_KEEP_ALIVE=10m
    # Uncomment the deploy section only if your server has an NVIDIA GPU
    # deploy:
    #   resources:
    #     reservations:
    #       devices:
    #         - driver: nvidia
    #           count: all
    #           capabilities: [gpu]

  open-webui:
    image: ghcr.io/open-webui/open-webui:main
    container_name: open-webui
    restart: unless-stopped
    ports:
      - "3000:8080"
    volumes:
      - open_webui_data:/app/backend/data
    environment:
      - OLLAMA_BASE_URL=http://ollama:11434
      - WEBUI_AUTH=true
      # Replace with a long random value before starting the stack
      - WEBUI_SECRET_KEY=change-this-to-a-random-string
    depends_on:
      - ollama

volumes:
  ollama_data:
  open_webui_data:
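The WEBUI_SECRET_KEY placeholder should be swapped for a real random value before the stack goes online. One way to produce one, sketched with Python's standard secrets module (the helper name and the 32-byte length are our own choices, not an Open WebUI requirement):

```python
import secrets


def generate_secret_key(num_bytes: int = 32) -> str:
    """Return a URL-safe random string built from num_bytes of randomness."""
    return secrets.token_urlsafe(num_bytes)


if __name__ == "__main__":
    # Paste the printed line into the environment section of docker-compose.yml.
    print(f"WEBUI_SECRET_KEY={generate_secret_key()}")
```

Generate the key once and keep it stable across restarts, since changing it later will likely sign existing users out.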

Start the stack:

docker compose up -d

Pull a model appropriate for your VPS:

# For CX23 (4 GB RAM): use a 3B model
docker exec -it ollama ollama pull llama3.2:3b

# For CX33 (8 GB RAM): you can try an 8B model
# (the 8B Llama model is published under the llama3.1 tag)
docker exec -it ollama ollama pull llama3.1:8b

Setting Up HTTPS with a Reverse Proxy

For remote access, you need HTTPS. The simplest approach is Caddy:

# Install Caddy

sudo apt install -y caddy

Edit /etc/caddy/Caddyfile:

chat.yourdomain.com {
    reverse_proxy localhost:3000
}

Reload Caddy:

sudo systemctl reload caddy

Caddy handles SSL certificates automatically. Your Open WebUI is now accessible at https://chat.yourdomain.com.

VPS Performance Expectations

Be realistic about what CPU inference delivers:

| Model | VPS Spec | Speed (approx.) | Usability |
|---|---|---|---|
| Llama 3.2 3B | CX23 (2 vCPU, 4 GB) | ~3–6 tok/s | Slow but usable for short queries |
| Llama 3.1 8B | CX33 (4 vCPU, 8 GB) | ~1–3 tok/s | Noticeable delay, OK for async use |
| Qwen 2.5 3B | CX23 (2 vCPU, 4 GB) | ~3–6 tok/s | Good quality-to-speed ratio |

CPU inference is measured in single-digit tokens per second, not the 30-50 tok/s you get with a local GPU. This is fine for asynchronous workflows — ask a question, do something else, come back to the answer. It is not great for rapid-fire interactive chat.
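To translate tokens per second into waiting time, divide the length of the answer by the generation speed. The sketch below does that for an assumed 300-token answer, which is an illustrative length rather than a benchmark result; it also ignores the time spent processing the prompt.

```python
def seconds_to_generate(num_tokens: int, tokens_per_second: float) -> float:
    """Return how long generating num_tokens takes at a steady rate."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return num_tokens / tokens_per_second


if __name__ == "__main__":
    answer_length = 300  # assumed answer length in tokens
    for label, speed in (("VPS, 3 tok/s", 3), ("local GPU, 40 tok/s", 40)):
        print(f"{label}: {seconds_to_generate(answer_length, speed):.1f} s")
```

At 3 tok/s that answer takes 100 seconds, against 7.5 seconds at 40 tok/s, which is the gap between asynchronous use and interactive chat.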

Model Recommendations by Use Case

Choosing the right model matters more than choosing the right hardware. Here is what works well in Ollama as of early 2026:

For Coding

- Qwen 2.5 Coder 7B — Best balance of code quality and resource usage. Handles Python, JavaScript, TypeScript, Go, and Rust well. If you are comparing self-hosted coding models against paid alternatives, see our AI coding tools pricing breakdown for the full cost picture.

- DeepSeek Coder V2 (distilled) — Strong at multi-file reasoning and debugging. Needs more RAM.

- Llama 3.1 8B — Decent general coding, but specialized coding models outperform it.

For Writing and Chat

- Llama 3.3 70B — Best open-source general model if you have the hardware (40+ GB RAM).

- Qwen 2.5 32B — Excellent writing quality, 83.2% MMLU score. Needs 16-20 GB.

- Gemma 2 9B — Surprisingly good writing quality for its size. Runs on 6 GB.

For Resource-Constrained Environments (VPS/Old Laptop)

- Llama 3.2 3B — Solid general capability in a tiny package. Best first model to try.

- Qwen 3.5 7B — 76.8% MMLU, 3x faster than the 32B variant. Great quality-per-watt.

- Phi-4 14B — Microsoft's efficient model. Good for development workflows if you have 10 GB.

For Multilingual Use

- Qwen 2.5 series — Supports 29+ languages natively. Best option for non-English work.

Model Comparison Table

| Model | Download size (Q4) | RAM needed (Q4) | MMLU | Best For |
|---|---|---|---|---|
| Llama 3.2 3B | 2 GB | 3 GB | ~60% | Budget VPS, quick queries |
| Llama 3.1 8B | 4.5 GB | 6 GB | ~70% | Local dev, general chat |
| Qwen 2.5 Coder 7B | 4 GB | 6 GB | N/A | Code generation |
| Gemma 2 9B | 5 GB | 7 GB | ~72% | Writing, summarization |
| Phi-4 14B | 8 GB | 10 GB | ~76% | Development workflows |
| Qwen 2.5 32B | 18 GB | 20 GB | 83.2% | High-quality writing |
| Llama 3.3 70B | 35 GB | 40 GB | ~86% | Best open-source general |

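Most of these models are released under licenses that allow commercial use (Apache 2.0, MIT, the Gemma Terms of Use, or the Llama Community License), though some attach conditions and a few model sizes ship under more restrictive terms. Check the license of the exact model and size before using it commercially.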

Open WebUI Features Worth Configuring

Once your stack is running, these settings improve the experience significantly:

System Prompts

Set default system prompts per model via Settings > Models. This lets you configure a coding assistant persona for your coding model and a writing assistant persona for your writing model.

Document Upload (RAG)

Open WebUI supports uploading PDFs, text files, and other documents for retrieval-augmented generation. Upload a document, and the model can answer questions about its contents. This works well with models 7B and above.

Multi-User Access

If you are deploying on a VPS for a small team, Open WebUI supports multiple user accounts with role-based access. The first registered user becomes admin and can invite others.

API Access

Open WebUI also exposes its own API, letting you programmatically interact with your models from scripts, CI pipelines, or other tools. Pair it with a self-hosted automation platform like n8n and you can build AI-powered workflows entirely on your own infrastructure — we compare the options in our Zapier vs Make vs n8n guide.

Performance Expectations: An Honest Assessment

Self-hosted LLMs have real limitations. Here is what to expect:

What Works Well

- Personal assistant for writing, brainstorming, and summarization — A 7-8B model handles these tasks surprisingly well.

- Code completion and review — Specialized coding models match or beat older GPT-3.5-level performance.

- Private document analysis — Upload sensitive documents and query them without data leaving your network.

- Learning and experimentation — Try different models, fine-tune for specific tasks, understand how LLMs work.

What Does Not Work Well

- Complex multi-step reasoning — Smaller models struggle with tasks that GPT-5 or Claude Opus handle easily.

- Multi-user production deployments — Ollama caps at ~4 parallel requests by default. It is designed for personal or small-team use.

- Speed-critical applications — CPU inference on a VPS delivers single-digit tokens per second. GPU inference on consumer hardware delivers 30-50 tok/s. API services deliver hundreds of tokens per second.

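- Long context windows — Newer open models such as Llama 3.1 advertise 128K-token contexts, but memory use grows with context length, so most local setups run at 8K-32K tokens in practice. Frontier API models offer 128K-200K+ tokens without that constraint.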

Throughput Numbers

From recent benchmarks:

- Ollama: ~41 tokens/second (single user, GPU)

- llama.cpp (CPU): ~80 tokens/second (optimized build, good CPU)

- vLLM: ~793 tokens/second (production deployment, A100 GPU)

Ollama is not a production inference server. It is a personal/dev tool. If you need multi-user production inference, look at vLLM, TGI, or managed services.

When to Use API Services Instead

Self-hosting is not always the right choice. Use API services when:

- You need frontier intelligence. GPT-5, Claude Opus 4, and Gemini 2.5 Pro are significantly more capable than any model you can self-host. For complex reasoning, creative work, or tasks where quality is non-negotiable, APIs win.

- Your volume is low. Below ~2 million tokens per day, API services are almost always cheaper than self-hosting once you factor in infrastructure, maintenance, and your time.

- You need high concurrency. If multiple people need simultaneous access with low latency, API services handle this natively. Self-hosted Ollama does not.

- Uptime matters. API providers offer 99.9%+ uptime with automatic failover. Your Hetzner VPS does not.
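The volume threshold in the list above can be recomputed for your own situation. The sketch below compares a flat monthly self-hosting cost with per-token API pricing. The $60/month all-in cost and the $1.00 per million tokens blended price are hypothetical inputs chosen for illustration, not figures from any provider's price list.

```python
def api_monthly_cost(tokens_per_day: float, price_per_million_tokens: float,
                     days: int = 30) -> float:
    """Monthly API bill for a steady daily token volume."""
    return tokens_per_day * days / 1_000_000 * price_per_million_tokens


def break_even_tokens_per_day(self_host_monthly_cost: float,
                              price_per_million_tokens: float,
                              days: int = 30) -> float:
    """Daily token volume at which the API bill equals the self-hosting cost."""
    return self_host_monthly_cost / price_per_million_tokens / days * 1_000_000


if __name__ == "__main__":
    # Hypothetical inputs: $60/month all-in self-hosting, $1.00 per million tokens.
    print(break_even_tokens_per_day(60, 1.00))
    print(api_monthly_cost(2_000_000, 1.00))
```

Below the break-even volume the API is cheaper; above it, self-hosting starts to pay off, provided the smaller model is good enough for the task.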

The smart approach for many teams is hybrid: self-host for privacy-sensitive tasks and high-volume simple queries, use APIs for complex reasoning and peak demand. We explored this infrastructure thinking in our article about how we built Effloow with 14 AI agents — we use a mix of API and self-hosted tools depending on the task.

Our Experience at Effloow

We run Ollama internally for specific workflows:

- Draft generation — First drafts of articles and documentation where privacy is not critical but cost adds up at volume.

- Code review assistance — Quick code reviews and refactoring suggestions where a 7B coding model is sufficient.

- Local RAG — Querying internal documents without sending proprietary content to external APIs.

For production content that needs to be high quality — like the articles on this site — we still use frontier API models. The quality gap between a self-hosted 7B and Claude Opus 4 is real and significant for long-form writing.

The setup runs alongside our other self-hosted tools. If you are already running a VPS with Coolify or Dokploy (as described in our Coolify vs Dokploy comparison), adding Ollama is just another Docker Compose service. You can also use Dify for building AI workflows that connect to your local Ollama instance for visual prompt chaining and RAG pipelines. If you are already using Docker extensively, Docker Model Runner is an alternative worth evaluating — it integrates models directly into Docker Compose as first-class services.

Quick Reference: Getting Started in Under 10 Minutes

Local (Mac/Linux):

# 1. Install Ollama
brew install ollama # macOS
# curl -fsSL https://ollama.com/install.sh | sh # Linux

# 2. Pull a model (the 8B Llama model is published under the llama3.1 tag)
ollama pull llama3.1:8b

# 3. Start the server (skip if Ollama is already running in the background)
ollama serve

# 4. Run Open WebUI
docker run -d -p 3000:8080 \
  --add-host=host.docker.internal:host-gateway \
  -v open-webui:/app/backend/data \
  --name open-webui \
  ghcr.io/open-webui/open-webui:main

# 5. Open http://localhost:3000

VPS (Hetzner):

# 1. SSH into your server

ssh root@your-server-ip

# 2. Install Docker

curl -fsSL https://get.docker.com | sh

# 3. Create docker-compose.yml (see VPS section above)

# 4. Start the stack

docker compose up -d

# 5. Pull a model

docker exec -it ollama ollama pull llama3.2:3b

Total time: 5-10 minutes. Total ongoing cost: $0 (local) or ~$5/month (VPS).

Conclusion

Self-hosting your own AI with Ollama and Open WebUI is no longer a weekend hack project. It is a legitimate, stable option for developers and small teams who want privacy, cost control, and the educational value of understanding how LLM inference works.

The stack is simple: Ollama runs models, Open WebUI provides the interface. Five minutes of setup on a local machine, or a Docker Compose file on a cheap VPS.

Will it replace ChatGPT Pro or Claude for complex work? No. But for the 80% of AI queries that do not need frontier intelligence — quick questions, drafting, code review, document analysis — it is free, private, and entirely under your control.

Start with a 3B model on whatever hardware you have. If you find yourself using it daily, upgrade to a bigger model or a dedicated VPS. The infrastructure scales with your needs, not your credit card. Use our AI Model Comparison Tool to compare available models before deciding which one to run locally.

Once you are comfortable running local models, the next step is connecting them to your own data. Our RAG tutorial with Python + LlamaIndex shows how to build a retrieval pipeline that uses Ollama for both embeddings and generation — keeping everything local and private.
